在 TensorFlow.org 上查看 在 TensorFlow.org 上查看

|

在 Google Colab 中运行 在 Google Colab 中运行

|

在 GitHub 上查看源代码 在 GitHub 上查看源代码

|

下载笔记本 下载笔记本

|

这是 Li 等人 2020 年 3 月 16 日发表的同名论文的 TensorFlow Probability 移植版本。我们在 TensorFlow Probability 平台上忠实地再现了原始作者的方法和结果,展示了 TFP 在现代流行病学建模环境中的部分功能。移植到 TensorFlow 使我们相对于原始 Matlab 代码获得了约 10 倍的加速,并且由于 TensorFlow Probability 普遍支持矢量化批处理计算,因此也能够很好地扩展到数百个独立的复制。

原始论文

Ruiyun Li、Sen Pei、Bin Chen、Yimeng Song、Tao Zhang、Wan Yang 和 Jeffrey Shaman。大量未记录感染促进新型冠状病毒 (SARS-CoV2) 快速传播。(2020),doi: https://doi.org/10.1126/science.abb3221 。

摘要:“估计未记录新型冠状病毒 (SARS-CoV2) 感染的流行率和传染性对于了解这种疾病的总体流行率和流行病潜力至关重要。在这里,我们使用中国报告感染的观察结果,结合流动性数据、网络动态元种群模型和贝叶斯推断,推断与 SARS-CoV2 相关的关键流行病学特征,包括未记录感染的比例及其传染性。我们估计在 2020 年 1 月 23 日旅行限制之前,所有感染中 86% 未被记录(95% CI:[82%–90%])。每人而言,未记录感染的传播率是记录感染的 55%([46%–62%]),但由于数量更多,未记录感染是 79% 记录病例的感染来源。这些发现解释了 SARS-CoV2 的快速地理传播,并表明控制这种病毒将特别具有挑战性。”

Github 链接 到代码和数据。

概述

该模型是一个区室性疾病模型,包含“易感”、“暴露”(感染但尚未具有传染性)、“从未记录的传染性”和“最终记录的传染性”的区室。有两个值得注意的特征:每个 375 个中国城市都有单独的区室,并假设人们如何从一个城市旅行到另一个城市;以及报告感染的延迟,因此在第 \(t\) 天成为“最终记录的传染性”的病例直到随机的后期才出现在观察到的病例计数中。

该模型假设从未记录的病例最终由于较轻而未被记录,因此以较低的速率感染他人。原始论文中感兴趣的主要参数是未记录病例的比例,以估计现有感染的程度以及未记录传播对疾病传播的影响。

此 colab 以自下而上的方式构建为代码演练。按顺序,我们将

- 摄取并简要检查数据,

- 定义模型的状态空间和动力学,

- 构建一套函数,用于按照 Li 等人的方法在模型中进行推断,以及

- 调用它们并检查结果。剧透:它们与论文中的结果相同。

安装和 Python 导入

pip3 install -q tf-nightly tfp-nightly

import collections

import io

import requests

import time

import zipfile

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import tensorflow.compat.v2 as tf

import tensorflow_probability as tfp

from tensorflow_probability.python.internal import samplers

tfd = tfp.distributions

tfes = tfp.experimental.sequential

数据导入

让我们从 github 导入数据并检查其中一些数据。

r = requests.get('https://raw.githubusercontent.com/SenPei-CU/COVID-19/master/Data.zip')

z = zipfile.ZipFile(io.BytesIO(r.content))

z.extractall('/tmp/')

raw_incidence = pd.read_csv('/tmp/data/Incidence.csv')

raw_mobility = pd.read_csv('/tmp/data/Mobility.csv')

raw_population = pd.read_csv('/tmp/data/pop.csv')

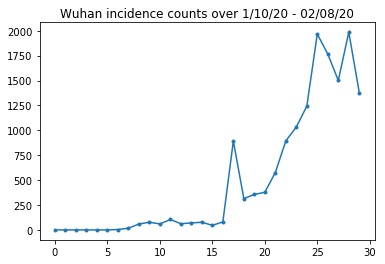

下面我们可以看到每天的原始发病率。我们最感兴趣的是前 14 天(1 月 10 日至 1 月 23 日),因为旅行限制是在 23 日实施的。该论文通过分别对 1 月 10 日至 23 日和 1 月 23 日之后进行建模来解决这个问题,使用不同的参数;我们只将我们的复制限制在早期阶段。

raw_incidence.drop('Date', axis=1) # The 'Date' column is all 1/18/21

# Luckily the days are in order, starting on January 10th, 2020.

让我们对武汉的发病率进行合理性检查。

plt.plot(raw_incidence.Wuhan, '.-')

plt.title('Wuhan incidence counts over 1/10/20 - 02/08/20')

plt.show()

到目前为止,一切顺利。现在是初始人口数量。

raw_population

让我们也检查并记录哪个条目是武汉。

raw_population['City'][169]

'Wuhan'

WUHAN_IDX = 169

在这里,我们看到了不同城市之间的流动矩阵。这是前 14 天不同城市之间人口流动数量的代理。它来自腾讯提供的 2018 年农历新年期间的 GPS 记录。Li 等人将 2020 年期间的流动性建模为某个未知(受推断影响)的常数因子 \(\theta\) 乘以这个。

raw_mobility

最后,让我们将所有这些预处理成我们可以使用的 numpy 数组。

# The given populations are only "initial" because of intercity mobility during

# the holiday season.

initial_population = raw_population['Population'].to_numpy().astype(np.float32)

将流动性数据转换为 [L, L, T] 形状的张量,其中 L 是位置数量,T 是时间步长数量。

daily_mobility_matrices = []

for i in range(1, 15):

day_mobility = raw_mobility[raw_mobility['Day'] == i]

# Make a matrix of daily mobilities.

z = pd.crosstab(

day_mobility.Origin,

day_mobility.Destination,

values=day_mobility['Mobility Index'], aggfunc='sum', dropna=False)

# Include every city, even if there are no rows for some in the raw data on

# some day. This uses the sort order of `raw_population`.

z = z.reindex(index=raw_population['City'], columns=raw_population['City'],

fill_value=0)

# Finally, fill any missing entries with 0. This means no mobility.

z = z.fillna(0)

daily_mobility_matrices.append(z.to_numpy())

mobility_matrix_over_time = np.stack(daily_mobility_matrices, axis=-1).astype(

np.float32)

最后,获取观察到的感染并制作一个 [L, T] 表格。

# Remove the date parameter and take the first 14 days.

observed_daily_infectious_count = raw_incidence.to_numpy()[:14, 1:]

observed_daily_infectious_count = np.transpose(

observed_daily_infectious_count).astype(np.float32)

并仔细检查我们是否按我们想要的方式获得了形状。提醒一下,我们正在处理 375 个城市和 14 天。

print('Mobility Matrix over time should have shape (375, 375, 14): {}'.format(

mobility_matrix_over_time.shape))

print('Observed Infectious should have shape (375, 14): {}'.format(

observed_daily_infectious_count.shape))

print('Initial population should have shape (375): {}'.format(

initial_population.shape))

Mobility Matrix over time should have shape (375, 375, 14): (375, 375, 14) Observed Infectious should have shape (375, 14): (375, 14) Initial population should have shape (375): (375,)

定义状态和参数

让我们开始定义我们的模型。我们正在复制的模型是 SEIR 模型 的变体。在这种情况下,我们有以下随时间变化的状态

- \(S\):每个城市中易感人群的数量。

- \(E\):每个城市中暴露于疾病但尚未具有传染性的人数。从生物学角度来说,这对应于感染疾病,因为所有暴露的人最终都会变得具有传染性。

- \(I^u\):每个城市中具有传染性但未记录的人数。在模型中,这实际上意味着“永远不会被记录”。

- \(I^r\):每个城市中具有传染性且已记录的人数。Li 等人对报告延迟进行了建模,因此 \(I^r\) 实际上对应于类似于“病例严重到足以在将来的某个时间点被记录”的东西。

正如我们将在下面看到的那样,我们将通过在时间上向前运行一个集成调整的卡尔曼滤波器 (EAKF) 来推断这些状态。EAKF 的状态向量是每个数量的城市索引向量。

该模型具有以下可推断的全局、时间不变参数

- \(\beta\):由已记录的传染性个体引起的传播率。

- \(\mu\):由未记录的传染性个体引起的相对传播率。这将通过乘积 \(\mu \beta\) 来发挥作用。

- \(\theta\):城市间流动因子。这是一个大于 1 的因子,用于校正流动性数据的低报(以及 2018 年至 2020 年的人口增长)。

- \(Z\):平均潜伏期(即处于“暴露”状态的时间)。

- \(\alpha\):这是足够严重以至于(最终)被记录的感染的比例。

- \(D\):平均感染持续时间(即处于任一“传染性”状态的时间)。

我们将使用围绕状态的 EAKF 的迭代滤波循环来推断这些参数的点估计。

该模型还依赖于未推断的常数

- \(M\):城市间流动矩阵。这是随时间变化的,并且假定为给定的。回想一下,它按推断参数 \(\theta\) 进行缩放,以给出城市之间实际的人口流动。

- \(N\):每个城市的人口总数。初始人口被视为给定的,人口的时间变化是根据流动性数字 \(\theta M\) 计算的。

首先,我们为保存我们的状态和参数提供一些数据结构。

SEIRComponents = collections.namedtuple(

typename='SEIRComponents',

field_names=[

'susceptible', # S

'exposed', # E

'documented_infectious', # I^r

'undocumented_infectious', # I^u

# This is the count of new cases in the "documented infectious" compartment.

# We need this because we will introduce a reporting delay, between a person

# entering I^r and showing up in the observable case count data.

# This can't be computed from the cumulative `documented_infectious` count,

# because some portion of that population will move to the 'recovered'

# state, which we aren't tracking explicitly.

'daily_new_documented_infectious'])

ModelParams = collections.namedtuple(

typename='ModelParams',

field_names=[

'documented_infectious_tx_rate', # Beta

'undocumented_infectious_tx_relative_rate', # Mu

'intercity_underreporting_factor', # Theta

'average_latency_period', # Z

'fraction_of_documented_infections', # Alpha

'average_infection_duration' # D

]

)

我们还对 Li 等人对参数值范围的界限进行了编码。

PARAMETER_LOWER_BOUNDS = ModelParams(

documented_infectious_tx_rate=0.8,

undocumented_infectious_tx_relative_rate=0.2,

intercity_underreporting_factor=1.,

average_latency_period=2.,

fraction_of_documented_infections=0.02,

average_infection_duration=2.

)

PARAMETER_UPPER_BOUNDS = ModelParams(

documented_infectious_tx_rate=1.5,

undocumented_infectious_tx_relative_rate=1.,

intercity_underreporting_factor=1.75,

average_latency_period=5.,

fraction_of_documented_infections=1.,

average_infection_duration=5.

)

SEIR 动力学

在这里,我们定义了参数和状态之间的关系。

Li 等人(补充材料,方程 1-5)的时间动力学方程如下

\(\frac{dS_i}{dt} = -\beta \frac{S_i I_i^r}{N_i} - \mu \beta \frac{S_i I_i^u}{N_i} + \theta \sum_k \frac{M_{ij} S_j}{N_j - I_j^r} - + \theta \sum_k \frac{M_{ji} S_j}{N_i - I_i^r}\)

\(\frac{dE_i}{dt} = \beta \frac{S_i I_i^r}{N_i} + \mu \beta \frac{S_i I_i^u}{N_i} -\frac{E_i}{Z} + \theta \sum_k \frac{M_{ij} E_j}{N_j - I_j^r} - + \theta \sum_k \frac{M_{ji} E_j}{N_i - I_i^r}\)

\(\frac{dI^r_i}{dt} = \alpha \frac{E_i}{Z} - \frac{I_i^r}{D}\)

\(\frac{dI^u_i}{dt} = (1 - \alpha) \frac{E_i}{Z} - \frac{I_i^u}{D} + \theta \sum_k \frac{M_{ij} I_j^u}{N_j - I_j^r} - + \theta \sum_k \frac{M_{ji} I^u_j}{N_i - I_i^r}\)

\(N_i = N_i + \theta \sum_j M_{ij} - \theta \sum_j M_{ji}\)

提醒一下,\(i\) 和 \(j\) 下标索引城市。这些方程通过以下方式对疾病的时间演变进行建模

- 与传染性个体的接触导致更多感染;

- 疾病从“暴露”状态发展到“传染性”状态之一;

- 疾病从“传染性”状态发展到恢复,我们通过从建模人群中移除来对这种状态进行建模;

- 城市间流动,包括暴露或未记录的传染性人员;以及

- 通过城市间流动,每天城市人口的时间变化。

遵循 Li 等人,我们假设病例严重到足以最终被报告的人不会在城市之间旅行。

同样遵循 Li 等人,我们将这些动力学视为受逐项泊松噪声的影响,即每个项实际上是泊松的速率,从该泊松中抽取的样本给出真实的变化。泊松噪声是逐项的,因为减去(而不是加上)泊松样本不会产生泊松分布的结果。

我们将使用经典的四阶龙格-库塔积分器在时间上向前演化这些动力学,但首先让我们定义计算它们的函数(包括对泊松噪声进行采样)。

def sample_state_deltas(

state, population, mobility_matrix, params, seed, is_deterministic=False):

"""Computes one-step change in state, including Poisson sampling.

Note that this is coded to support vectorized evaluation on arbitrary-shape

batches of states. This is useful, for example, for running multiple

independent replicas of this model to compute credible intervals for the

parameters. We refer to the arbitrary batch shape with the conventional

`B` in the parameter documentation below. This function also, of course,

supports broadcasting over the batch shape.

Args:

state: A `SEIRComponents` tuple with fields Tensors of shape

B + [num_locations] giving the current disease state.

population: A Tensor of shape B + [num_locations] giving the current city

populations.

mobility_matrix: A Tensor of shape B + [num_locations, num_locations] giving

the current baseline inter-city mobility.

params: A `ModelParams` tuple with fields Tensors of shape B giving the

global parameters for the current EAKF run.

seed: Initial entropy for pseudo-random number generation. The Poisson

sampling is repeatable by supplying the same seed.

is_deterministic: A `bool` flag to turn off Poisson sampling if desired.

Returns:

delta: A `SEIRComponents` tuple with fields Tensors of shape

B + [num_locations] giving the one-day changes in the state, according

to equations 1-4 above (including Poisson noise per Li et al).

"""

undocumented_infectious_fraction = state.undocumented_infectious / population

documented_infectious_fraction = state.documented_infectious / population

# Anyone not documented as infectious is considered mobile

mobile_population = (population - state.documented_infectious)

def compute_outflow(compartment_population):

raw_mobility = tf.linalg.matvec(

mobility_matrix, compartment_population / mobile_population)

return params.intercity_underreporting_factor * raw_mobility

def compute_inflow(compartment_population):

raw_mobility = tf.linalg.matmul(

mobility_matrix,

(compartment_population / mobile_population)[..., tf.newaxis],

transpose_a=True)

return params.intercity_underreporting_factor * tf.squeeze(

raw_mobility, axis=-1)

# Helper for sampling the Poisson-variate terms.

seeds = samplers.split_seed(seed, n=11)

if is_deterministic:

def sample_poisson(rate):

return rate

else:

def sample_poisson(rate):

return tfd.Poisson(rate=rate).sample(seed=seeds.pop())

# Below are the various terms called U1-U12 in the paper. We combined the

# first two, which should be fine; both are poisson so their sum is too, and

# there's no risk (as there could be in other terms) of going negative.

susceptible_becoming_exposed = sample_poisson(

state.susceptible *

(params.documented_infectious_tx_rate *

documented_infectious_fraction +

(params.undocumented_infectious_tx_relative_rate *

params.documented_infectious_tx_rate) *

undocumented_infectious_fraction)) # U1 + U2

susceptible_population_inflow = sample_poisson(

compute_inflow(state.susceptible)) # U3

susceptible_population_outflow = sample_poisson(

compute_outflow(state.susceptible)) # U4

exposed_becoming_documented_infectious = sample_poisson(

params.fraction_of_documented_infections *

state.exposed / params.average_latency_period) # U5

exposed_becoming_undocumented_infectious = sample_poisson(

(1 - params.fraction_of_documented_infections) *

state.exposed / params.average_latency_period) # U6

exposed_population_inflow = sample_poisson(

compute_inflow(state.exposed)) # U7

exposed_population_outflow = sample_poisson(

compute_outflow(state.exposed)) # U8

documented_infectious_becoming_recovered = sample_poisson(

state.documented_infectious /

params.average_infection_duration) # U9

undocumented_infectious_becoming_recovered = sample_poisson(

state.undocumented_infectious /

params.average_infection_duration) # U10

undocumented_infectious_population_inflow = sample_poisson(

compute_inflow(state.undocumented_infectious)) # U11

undocumented_infectious_population_outflow = sample_poisson(

compute_outflow(state.undocumented_infectious)) # U12

# The final state_deltas

return SEIRComponents(

# Equation [1]

susceptible=(-susceptible_becoming_exposed +

susceptible_population_inflow +

-susceptible_population_outflow),

# Equation [2]

exposed=(susceptible_becoming_exposed +

-exposed_becoming_documented_infectious +

-exposed_becoming_undocumented_infectious +

exposed_population_inflow +

-exposed_population_outflow),

# Equation [3]

documented_infectious=(

exposed_becoming_documented_infectious +

-documented_infectious_becoming_recovered),

# Equation [4]

undocumented_infectious=(

exposed_becoming_undocumented_infectious +

-undocumented_infectious_becoming_recovered +

undocumented_infectious_population_inflow +

-undocumented_infectious_population_outflow),

# New to-be-documented infectious cases, subject to the delayed

# observation model.

daily_new_documented_infectious=exposed_becoming_documented_infectious)

这是积分器。这完全是标准的,除了将 PRNG 种子传递给 sample_state_deltas 函数以在龙格-库塔方法要求的每个部分步骤中获得独立的泊松噪声。

@tf.function(autograph=False)

def rk4_one_step(state, population, mobility_matrix, params, seed):

"""Implement one step of RK4, wrapped around a call to sample_state_deltas."""

# One seed for each RK sub-step

seeds = samplers.split_seed(seed, n=4)

deltas = tf.nest.map_structure(tf.zeros_like, state)

combined_deltas = tf.nest.map_structure(tf.zeros_like, state)

for a, b in zip([1., 2, 2, 1.], [6., 3., 3., 6.]):

next_input = tf.nest.map_structure(

lambda x, delta, a=a: x + delta / a, state, deltas)

deltas = sample_state_deltas(

next_input,

population,

mobility_matrix,

params,

seed=seeds.pop(), is_deterministic=False)

combined_deltas = tf.nest.map_structure(

lambda x, delta, b=b: x + delta / b, combined_deltas, deltas)

return tf.nest.map_structure(

lambda s, delta: s + tf.round(delta),

state, combined_deltas)

初始化

在这里,我们实现了论文中的初始化方案。

遵循 Li 等人,我们的推断方案将是一个集成调整的卡尔曼滤波器内循环,周围环绕着一个迭代滤波外循环 (IF-EAKF)。从计算角度来说,这意味着我们需要三种类型的初始化

- 内 EAKF 的初始状态

- 外 IF 的初始参数,这些参数也是第一个 EAKF 的初始参数

- 从一个 IF 迭代更新到下一个 IF 迭代的参数,这些参数用作除第一个 EAKF 之外的每个 EAKF 的初始参数。

def initialize_state(num_particles, num_batches, seed):

"""Initialize the state for a batch of EAKF runs.

Args:

num_particles: `int` giving the number of particles for the EAKF.

num_batches: `int` giving the number of independent EAKF runs to

initialize in a vectorized batch.

seed: PRNG entropy.

Returns:

state: A `SEIRComponents` tuple with Tensors of shape [num_particles,

num_batches, num_cities] giving the initial conditions in each

city, in each filter particle, in each batch member.

"""

num_cities = mobility_matrix_over_time.shape[-2]

state_shape = [num_particles, num_batches, num_cities]

susceptible = initial_population * np.ones(state_shape, dtype=np.float32)

documented_infectious = np.zeros(state_shape, dtype=np.float32)

daily_new_documented_infectious = np.zeros(state_shape, dtype=np.float32)

# Following Li et al, initialize Wuhan with up to 2000 people exposed

# and another up to 2000 undocumented infectious.

rng = np.random.RandomState(seed[0] % (2**31 - 1))

wuhan_exposed = rng.randint(

0, 2001, [num_particles, num_batches]).astype(np.float32)

wuhan_undocumented_infectious = rng.randint(

0, 2001, [num_particles, num_batches]).astype(np.float32)

# Also following Li et al, initialize cities adjacent to Wuhan with three

# days' worth of additional exposed and undocumented-infectious cases,

# as they may have traveled there before the beginning of the modeling

# period.

exposed = 3 * mobility_matrix_over_time[

WUHAN_IDX, :, 0] * wuhan_exposed[

..., np.newaxis] / initial_population[WUHAN_IDX]

undocumented_infectious = 3 * mobility_matrix_over_time[

WUHAN_IDX, :, 0] * wuhan_undocumented_infectious[

..., np.newaxis] / initial_population[WUHAN_IDX]

exposed[..., WUHAN_IDX] = wuhan_exposed

undocumented_infectious[..., WUHAN_IDX] = wuhan_undocumented_infectious

# Following Li et al, we do not remove the initial exposed and infectious

# persons from the susceptible population.

return SEIRComponents(

susceptible=tf.constant(susceptible),

exposed=tf.constant(exposed),

documented_infectious=tf.constant(documented_infectious),

undocumented_infectious=tf.constant(undocumented_infectious),

daily_new_documented_infectious=tf.constant(daily_new_documented_infectious))

def initialize_params(num_particles, num_batches, seed):

"""Initialize the global parameters for the entire inference run.

Args:

num_particles: `int` giving the number of particles for the EAKF.

num_batches: `int` giving the number of independent EAKF runs to

initialize in a vectorized batch.

seed: PRNG entropy.

Returns:

params: A `ModelParams` tuple with fields Tensors of shape

[num_particles, num_batches] giving the global parameters

to use for the first batch of EAKF runs.

"""

# We have 6 parameters. We'll initialize with a Sobol sequence,

# covering the hyper-rectangle defined by our parameter limits.

halton_sequence = tfp.mcmc.sample_halton_sequence(

dim=6, num_results=num_particles * num_batches, seed=seed)

halton_sequence = tf.reshape(

halton_sequence, [num_particles, num_batches, 6])

halton_sequences = tf.nest.pack_sequence_as(

PARAMETER_LOWER_BOUNDS, tf.split(

halton_sequence, num_or_size_splits=6, axis=-1))

def interpolate(minval, maxval, h):

return (maxval - minval) * h + minval

return tf.nest.map_structure(

interpolate,

PARAMETER_LOWER_BOUNDS, PARAMETER_UPPER_BOUNDS, halton_sequences)

def update_params(num_particles, num_batches,

prev_params, parameter_variance, seed):

"""Update the global parameters between EAKF runs.

Args:

num_particles: `int` giving the number of particles for the EAKF.

num_batches: `int` giving the number of independent EAKF runs to

initialize in a vectorized batch.

prev_params: A `ModelParams` tuple of the parameters used for the previous

EAKF run.

parameter_variance: A `ModelParams` tuple specifying how much to drift

each parameter.

seed: PRNG entropy.

Returns:

params: A `ModelParams` tuple with fields Tensors of shape

[num_particles, num_batches] giving the global parameters

to use for the next batch of EAKF runs.

"""

# Initialize near the previous set of parameters. This is the first step

# in Iterated Filtering.

seeds = tf.nest.pack_sequence_as(

prev_params, samplers.split_seed(seed, n=len(prev_params)))

return tf.nest.map_structure(

lambda x, v, seed: x + tf.math.sqrt(v) * tf.random.stateless_normal([

num_particles, num_batches, 1], seed=seed),

prev_params, parameter_variance, seeds)

延迟

该模型的一个重要特征是明确考虑了感染比开始时间晚报告的事实。也就是说,我们预计从 \(E\) 区室移动到 \(I^r\) 区室的人可能要到几天后才会出现在可观察的报告病例计数中。

我们假设延迟是伽马分布的。遵循 Li 等人,我们对形状使用 1.85,并将速率参数化为产生 9 天的平均报告延迟。

def raw_reporting_delay_distribution(gamma_shape=1.85, reporting_delay=9.):

return tfp.distributions.Gamma(

concentration=gamma_shape, rate=gamma_shape / reporting_delay)

我们的观察是离散的,因此我们将原始(连续)延迟向上舍入到最接近的一天。我们还有一个有限的数据范围,因此单个人的延迟分布是剩余天数上的一个类别。因此,我们可以通过预先计算多项式延迟概率来更有效地计算每个城市的预测观察结果,而不是对 \(O(I^r)\) 个伽马进行采样。

def reporting_delay_probs(num_timesteps, gamma_shape=1.85, reporting_delay=9.):

gamma_dist = raw_reporting_delay_distribution(gamma_shape, reporting_delay)

multinomial_probs = [gamma_dist.cdf(1.)]

for k in range(2, num_timesteps + 1):

multinomial_probs.append(gamma_dist.cdf(k) - gamma_dist.cdf(k - 1))

# For samples that are larger than T.

multinomial_probs.append(gamma_dist.survival_function(num_timesteps))

multinomial_probs = tf.stack(multinomial_probs)

return multinomial_probs

以下是将这些延迟实际应用于新的每日记录的传染性计数的代码

def delay_reporting(

daily_new_documented_infectious, num_timesteps, t, multinomial_probs, seed):

# This is the distribution of observed infectious counts from the current

# timestep.

raw_delays = tfd.Multinomial(

total_count=daily_new_documented_infectious,

probs=multinomial_probs).sample(seed=seed)

# The last bucket is used for samples that are out of range of T + 1. Thus

# they are not going to be observable in this model.

clipped_delays = raw_delays[..., :-1]

# We can also remove counts that are such that t + i >= T.

clipped_delays = clipped_delays[..., :num_timesteps - t]

# We finally shift everything by t. That means prepending with zeros.

return tf.concat([

tf.zeros(

tf.concat([

tf.shape(clipped_delays)[:-1], [t]], axis=0),

dtype=clipped_delays.dtype),

clipped_delays], axis=-1)

推断

首先,我们将为推断定义一些数据结构。

特别是,我们将要进行迭代滤波,它在进行推断时将状态和参数打包在一起。因此,我们将定义一个 ParameterStatePair 对象。

我们还希望将任何辅助信息打包到模型中。

ParameterStatePair = collections.namedtuple(

'ParameterStatePair', ['state', 'params'])

# Info that is tracked and mutated but should not have inference performed over.

SideInfo = collections.namedtuple(

'SideInfo', [

# Observations at every time step.

'observations_over_time',

'initial_population',

'mobility_matrix_over_time',

'population',

# Used for variance of measured observations.

'actual_reported_cases',

# Pre-computed buckets for the multinomial distribution.

'multinomial_probs',

'seed',

])

# Cities can not fall below this fraction of people

MINIMUM_CITY_FRACTION = 0.6

# How much to inflate the covariance by.

INFLATION_FACTOR = 1.1

INFLATE_FN = tfes.inflate_by_scaled_identity_fn(INFLATION_FACTOR)

以下是完整的观察模型,打包用于集成卡尔曼滤波器。

有趣的功能是报告延迟(如前所述计算)。上游模型为每个城市在每个时间步长发出 daily_new_documented_infectious。

# We observe the observed infections.

def observation_fn(t, state_params, extra):

"""Generate reported cases.

Args:

state_params: A `ParameterStatePair` giving the current parameters

and state.

t: Integer giving the current time.

extra: A `SideInfo` carrying auxiliary information.

Returns:

observations: A Tensor of predicted observables, namely new cases

per city at time `t`.

extra: Update `SideInfo`.

"""

# Undo padding introduced in `inference`.

daily_new_documented_infectious = state_params.state.daily_new_documented_infectious[..., 0]

# Number of people that we have already committed to become

# observed infectious over time.

# shape: batch + [num_particles, num_cities, time]

observations_over_time = extra.observations_over_time

num_timesteps = observations_over_time.shape[-1]

seed, new_seed = samplers.split_seed(extra.seed, salt='reporting delay')

daily_delayed_counts = delay_reporting(

daily_new_documented_infectious, num_timesteps, t,

extra.multinomial_probs, seed)

observations_over_time = observations_over_time + daily_delayed_counts

extra = extra._replace(

observations_over_time=observations_over_time,

seed=new_seed)

# Actual predicted new cases, re-padded.

adjusted_observations = observations_over_time[..., t][..., tf.newaxis]

# Finally observations have variance that is a function of the true observations:

return tfd.MultivariateNormalDiag(

loc=adjusted_observations,

scale_diag=tf.math.maximum(

2., extra.actual_reported_cases[..., t][..., tf.newaxis] / 2.)), extra

在这里,我们定义了转换动力学。我们已经完成了语义工作;在这里,我们只是将其打包到 EAKF 框架中,并且遵循 Li 等人,将城市人口剪裁以防止它们变得太小。

def transition_fn(t, state_params, extra):

"""SEIR dynamics.

Args:

state_params: A `ParameterStatePair` giving the current parameters

and state.

t: Integer giving the current time.

extra: A `SideInfo` carrying auxiliary information.

Returns:

state_params: A `ParameterStatePair` predicted for the next time step.

extra: Updated `SideInfo`.

"""

mobility_t = extra.mobility_matrix_over_time[..., t]

new_seed, rk4_seed = samplers.split_seed(extra.seed, salt='Transition')

new_state = rk4_one_step(

state_params.state,

extra.population,

mobility_t,

state_params.params,

seed=rk4_seed)

# Make sure population doesn't go below MINIMUM_CITY_FRACTION.

new_population = (

extra.population + state_params.params.intercity_underreporting_factor * (

# Inflow

tf.reduce_sum(mobility_t, axis=-2) -

# Outflow

tf.reduce_sum(mobility_t, axis=-1)))

new_population = tf.where(

new_population < MINIMUM_CITY_FRACTION * extra.initial_population,

extra.initial_population * MINIMUM_CITY_FRACTION,

new_population)

extra = extra._replace(population=new_population, seed=new_seed)

# The Ensemble Kalman Filter code expects the transition function to return a distribution.

# As the dynamics and noise are encapsulated above, we construct a `JointDistribution` that when

# sampled, returns the values above.

new_state = tfd.JointDistributionNamed(

model=tf.nest.map_structure(lambda x: tfd.VectorDeterministic(x), new_state))

params = tfd.JointDistributionNamed(

model=tf.nest.map_structure(lambda x: tfd.VectorDeterministic(x), state_params.params))

state_params = tfd.JointDistributionNamed(

model=ParameterStatePair(state=new_state, params=params))

return state_params, extra

最后,我们定义了推断方法。这是一个双循环,外循环是迭代滤波,而内循环是集成调整的卡尔曼滤波。

# Use tf.function to speed up EAKF prediction and updates.

ensemble_kalman_filter_predict = tf.function(

tfes.ensemble_kalman_filter_predict, autograph=False)

ensemble_adjustment_kalman_filter_update = tf.function(

tfes.ensemble_adjustment_kalman_filter_update, autograph=False)

def inference(

num_ensembles,

num_batches,

num_iterations,

actual_reported_cases,

mobility_matrix_over_time,

seed=None,

# This is how much to reduce the variance by in every iterative

# filtering step.

variance_shrinkage_factor=0.9,

# Days before infection is reported.

reporting_delay=9.,

# Shape parameter of Gamma distribution.

gamma_shape_parameter=1.85):

"""Inference for the Shaman, et al. model.

Args:

num_ensembles: Number of particles to use for EAKF.

num_batches: Number of batches of IF-EAKF to run.

num_iterations: Number of iterations to run iterative filtering.

actual_reported_cases: `Tensor` of shape `[L, T]` where `L` is the number

of cities, and `T` is the timesteps.

mobility_matrix_over_time: `Tensor` of shape `[L, L, T]` which specifies the

mobility between locations over time.

variance_shrinkage_factor: Python `float`. How much to reduce the

variance each iteration of iterated filtering.

reporting_delay: Python `float`. How many days before the infection

is reported.

gamma_shape_parameter: Python `float`. Shape parameter of Gamma distribution

of reporting delays.

Returns:

result: A `ModelParams` with fields Tensors of shape [num_batches],

containing the inferred parameters at the final iteration.

"""

print('Starting inference.')

num_timesteps = actual_reported_cases.shape[-1]

params_per_iter = []

multinomial_probs = reporting_delay_probs(

num_timesteps, gamma_shape_parameter, reporting_delay)

seed = samplers.sanitize_seed(seed, salt='Inference')

for i in range(num_iterations):

start_if_time = time.time()

seeds = samplers.split_seed(seed, n=4, salt='Initialize')

if params_per_iter:

parameter_variance = tf.nest.map_structure(

lambda minval, maxval: variance_shrinkage_factor ** (

2 * i) * (maxval - minval) ** 2 / 4.,

PARAMETER_LOWER_BOUNDS, PARAMETER_UPPER_BOUNDS)

params_t = update_params(

num_ensembles,

num_batches,

prev_params=params_per_iter[-1],

parameter_variance=parameter_variance,

seed=seeds.pop())

else:

params_t = initialize_params(num_ensembles, num_batches, seed=seeds.pop())

state_t = initialize_state(num_ensembles, num_batches, seed=seeds.pop())

population_t = sum(x for x in state_t)

observations_over_time = tf.zeros(

[num_ensembles,

num_batches,

actual_reported_cases.shape[0], num_timesteps])

extra = SideInfo(

observations_over_time=observations_over_time,

initial_population=tf.identity(population_t),

mobility_matrix_over_time=mobility_matrix_over_time,

population=population_t,

multinomial_probs=multinomial_probs,

actual_reported_cases=actual_reported_cases,

seed=seeds.pop())

# Clip states

state_t = clip_state(state_t, population_t)

params_t = clip_params(params_t, seed=seeds.pop())

# Accrue the parameter over time. We'll be averaging that

# and using that as our MLE estimate.

params_over_time = tf.nest.map_structure(

lambda x: tf.identity(x), params_t)

state_params = ParameterStatePair(state=state_t, params=params_t)

eakf_state = tfes.EnsembleKalmanFilterState(

step=tf.constant(0), particles=state_params, extra=extra)

for j in range(num_timesteps):

seeds = samplers.split_seed(eakf_state.extra.seed, n=3)

extra = extra._replace(seed=seeds.pop())

# Predict step.

# Inflate and clip.

new_particles = INFLATE_FN(eakf_state.particles)

state_t = clip_state(new_particles.state, eakf_state.extra.population)

params_t = clip_params(new_particles.params, seed=seeds.pop())

eakf_state = eakf_state._replace(

particles=ParameterStatePair(params=params_t, state=state_t))

eakf_predict_state = ensemble_kalman_filter_predict(eakf_state, transition_fn)

# Clip the state and particles.

state_params = eakf_predict_state.particles

state_t = clip_state(

state_params.state, eakf_predict_state.extra.population)

state_params = ParameterStatePair(state=state_t, params=state_params.params)

# We preprocess the state and parameters by affixing a 1 dimension. This is because for

# inference, we treat each city as independent. We could also introduce localization by

# considering cities that are adjacent.

state_params = tf.nest.map_structure(lambda x: x[..., tf.newaxis], state_params)

eakf_predict_state = eakf_predict_state._replace(particles=state_params)

# Update step.

eakf_update_state = ensemble_adjustment_kalman_filter_update(

eakf_predict_state,

actual_reported_cases[..., j][..., tf.newaxis],

observation_fn)

state_params = tf.nest.map_structure(

lambda x: x[..., 0], eakf_update_state.particles)

# Clip to ensure parameters / state are well constrained.

state_t = clip_state(

state_params.state, eakf_update_state.extra.population)

# Finally for the parameters, we should reduce over all updates. We get

# an extra dimension back so let's do that.

params_t = tf.nest.map_structure(

lambda x, y: x + tf.reduce_sum(y[..., tf.newaxis] - x, axis=-2, keepdims=True),

eakf_predict_state.particles.params, state_params.params)

params_t = clip_params(params_t, seed=seeds.pop())

params_t = tf.nest.map_structure(lambda x: x[..., 0], params_t)

state_params = ParameterStatePair(state=state_t, params=params_t)

eakf_state = eakf_update_state

eakf_state = eakf_state._replace(particles=state_params)

# Flatten and collect the inferred parameter at time step t.

params_over_time = tf.nest.map_structure(

lambda s, x: tf.concat([s, x], axis=-1), params_over_time, params_t)

est_params = tf.nest.map_structure(

# Take the average over the Ensemble and over time.

lambda x: tf.math.reduce_mean(x, axis=[0, -1])[..., tf.newaxis],

params_over_time)

params_per_iter.append(est_params)

print('Iterated Filtering {} / {} Ran in: {:.2f} seconds'.format(

i, num_iterations, time.time() - start_if_time))

return tf.nest.map_structure(

lambda x: tf.squeeze(x, axis=-1), params_per_iter[-1])

最后细节:剪裁参数和状态包括确保它们在范围内且非负。

def clip_state(state, population):

"""Clip state to sensible values."""

state = tf.nest.map_structure(

lambda x: tf.where(x < 0, 0., x), state)

# If S > population, then adjust as well.

susceptible = tf.where(state.susceptible > population, population, state.susceptible)

return SEIRComponents(

susceptible=susceptible,

exposed=state.exposed,

documented_infectious=state.documented_infectious,

undocumented_infectious=state.undocumented_infectious,

daily_new_documented_infectious=state.daily_new_documented_infectious)

def clip_params(params, seed):

"""Clip parameters to bounds."""

def _clip(p, minval, maxval):

return tf.where(

p < minval,

minval * (1. + 0.1 * tf.random.stateless_uniform(p.shape, seed=seed)),

tf.where(p > maxval,

maxval * (1. - 0.1 * tf.random.stateless_uniform(

p.shape, seed=seed)), p))

params = tf.nest.map_structure(

_clip, params, PARAMETER_LOWER_BOUNDS, PARAMETER_UPPER_BOUNDS)

return params

将所有内容整合在一起

# Let's sample the parameters.

#

# NOTE: Li et al. run inference 1000 times, which would take a few hours.

# Here we run inference 30 times (in a single, vectorized batch).

best_parameters = inference(

num_ensembles=300,

num_batches=30,

num_iterations=10,

actual_reported_cases=observed_daily_infectious_count,

mobility_matrix_over_time=mobility_matrix_over_time)

Starting inference. Iterated Filtering 0 / 10 Ran in: 26.65 seconds Iterated Filtering 1 / 10 Ran in: 28.69 seconds Iterated Filtering 2 / 10 Ran in: 28.06 seconds Iterated Filtering 3 / 10 Ran in: 28.48 seconds Iterated Filtering 4 / 10 Ran in: 28.57 seconds Iterated Filtering 5 / 10 Ran in: 28.35 seconds Iterated Filtering 6 / 10 Ran in: 28.35 seconds Iterated Filtering 7 / 10 Ran in: 28.19 seconds Iterated Filtering 8 / 10 Ran in: 28.58 seconds Iterated Filtering 9 / 10 Ran in: 28.23 seconds

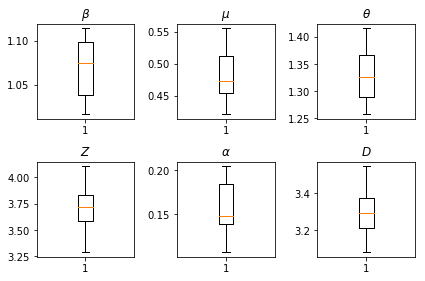

我们推断的结果。我们绘制了所有全局参数的最大似然值,以显示它们在我们 num_batches 次独立推断运行中的变化。这对应于补充材料中的表 S1。

fig, axs = plt.subplots(2, 3)

axs[0, 0].boxplot(best_parameters.documented_infectious_tx_rate,

whis=(2.5,97.5), sym='')

axs[0, 0].set_title(r'$\beta$')

axs[0, 1].boxplot(best_parameters.undocumented_infectious_tx_relative_rate,

whis=(2.5,97.5), sym='')

axs[0, 1].set_title(r'$\mu$')

axs[0, 2].boxplot(best_parameters.intercity_underreporting_factor,

whis=(2.5,97.5), sym='')

axs[0, 2].set_title(r'$\theta$')

axs[1, 0].boxplot(best_parameters.average_latency_period,

whis=(2.5,97.5), sym='')

axs[1, 0].set_title(r'$Z$')

axs[1, 1].boxplot(best_parameters.fraction_of_documented_infections,

whis=(2.5,97.5), sym='')

axs[1, 1].set_title(r'$\alpha$')

axs[1, 2].boxplot(best_parameters.average_infection_duration,

whis=(2.5,97.5), sym='')

axs[1, 2].set_title(r'$D$')

plt.tight_layout()