
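In this colab, we explore Gaussian process regression using TensorFlow and TensorFlow Probability. We generate some noisy observations from a known function and fit a GP model to those data. We then sample from the GP posterior and plot the sampled function values over a grid in their domain.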

Background

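Let \(\mathcal{X}\) be any set. A Gaussian process (GP) is a collection of random variables indexed by \(\mathcal{X}\) such that if \(\{X_1, \ldots, X_n\} \subset \mathcal{X}\) is any finite subset, the marginal density \(p(X_1 = x_1, \ldots, X_n = x_n)\) is multivariate Gaussian. Any Gaussian distribution is completely specified by its mean and covariance, and GPs are no exception. We can specify a GP completely in terms of its mean function \(\mu : \mathcal{X} \to \mathbb{R}\) and covariance function \(k : \mathcal{X} \times \mathcal{X} \to \mathbb{R}\). For various reasons, the covariance function is also referred to as a kernel. The only requirement is that it be symmetric and positive-definite (see Ch. 4 of Rasmussen & Williams). Below we use the exponentiated quadratic covariance kernel. Its form is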

\[ k(x, x') := \sigma^2 \exp \left( -\frac{\|x - x'\|^2}{2\lambda^2} \right) \]

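where \(\sigma\) is called the "amplitude" (so \(\sigma^2 = k(x, x)\) is the marginal variance) and \(\lambda\) is the length scale. The kernel parameters can be chosen via a maximum likelihood optimization procedure.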
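As a concrete illustration, the following NumPy sketch evaluates this kernel on a small grid and checks the two properties required above: the resulting matrix is symmetric and positive semi-definite (up to round-off). It assumes the parameterization used by tfk.ExponentiatedQuadratic (amplitude \(\sigma\), length scale \(\lambda\), and a factor of 2 in the denominator). The helper name exponentiated_quadratic is hypothetical and is not part of any library.

```python
import numpy as np

def exponentiated_quadratic(x1, x2, amplitude=1.0, length_scale=1.0):
    """Kernel matrix k(x, x') = amplitude**2 * exp(-||x - x'||**2 / (2 * length_scale**2)).

    x1 has shape [N, D], x2 has shape [M, D]; the result has shape [N, M].
    """
    # Pairwise squared Euclidean distances via broadcasting: [N, 1, D] - [1, M, D].
    sq_dist = np.sum((x1[:, None, :] - x2[None, :, :]) ** 2, axis=-1)
    return amplitude ** 2 * np.exp(-0.5 * sq_dist / length_scale ** 2)

x = np.linspace(-1.0, 1.0, 5)[:, None]  # five 1-D index points, shape [5, 1]
K = exponentiated_quadratic(x, x, amplitude=2.0, length_scale=0.5)

print(np.allclose(K, K.T))                   # symmetric
print(np.allclose(np.diag(K), 4.0))          # k(x, x) = amplitude**2
print(np.linalg.eigvalsh(K).min() > -1e-10)  # positive semi-definite up to round-off
```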

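A full sample from a GP is a real-valued function over the entire space \(\mathcal{X}\), and is impractical to realize in practice. Instead, one usually chooses a set of points at which to observe the sample and draws the function values at those points. This is done by sampling from an appropriate (finite-dimensional) multivariate Gaussian.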
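Here is a minimal sketch of drawing GP prior samples on a finite grid. It assumes NumPy's Generator.multivariate_normal, a zero mean function, and illustrative kernel settings (amplitude 1, length scale 0.3). The helper eq_kernel is hypothetical. The small diagonal jitter is a common numerical safeguard and is not part of the GP definition.

```python
import numpy as np

def eq_kernel(x1, x2, amplitude=1.0, length_scale=0.3):
    # Exponentiated quadratic kernel on 1-D inputs (hypothetical helper).
    return amplitude ** 2 * np.exp(-0.5 * (x1[:, None] - x2[None, :]) ** 2 / length_scale ** 2)

rng = np.random.default_rng(0)
grid = np.linspace(-1.0, 1.0, 50)   # the finite set of points at which we observe the sample
mean = np.zeros_like(grid)          # zero prior mean function
cov = eq_kernel(grid, grid) + 1e-9 * np.eye(grid.size)  # jitter for numerical stability

# Each row is one joint prior draw of the function values at the 50 grid points.
prior_draws = rng.multivariate_normal(mean, cov, size=3)
print(prior_draws.shape)  # (3, 50)
```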

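Note that, by the above definition, any finite-dimensional multivariate Gaussian distribution is also a Gaussian process (indexed by a finite set). Usually, when one refers to a GP, the index set is implicitly some \(\mathbb{R}^n\), and we make that assumption here.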

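A common application of Gaussian processes in machine learning is Gaussian process regression. The idea is that we wish to estimate an unknown function given noisy observations \(\{y_1, \ldots, y_N\}\) of that function at a finite number of points \(\{x_1, \ldots, x_N\}\). We imagine a generative process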

\[ \begin{aligned} f \sim \: & \textsf{GaussianProcess}\left( \text{mean\_fn}=\mu(x), \text{covariance\_fn}=k(x, x')\right) \\ y_i \sim \: & \textsf{Normal}\left( \text{loc}=f(x_i), \text{scale}=\sigma\right), \quad i = 1, \ldots, N \end{aligned} \]

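As noted above, the sampled function is impossible to compute, because we would need its value at infinitely many points. Instead, one considers a finite sample from a multivariate Gaussian.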

\[ \begin{aligned} \begin{bmatrix} f(x_1) \\ \vdots \\ f(x_N) \end{bmatrix} &\sim \textsf{MultivariateNormal} \left( \text{loc}= \begin{bmatrix} \mu(x_1) \\ \vdots \\ \mu(x_N) \end{bmatrix},\ \text{scale}= \begin{bmatrix} k(x_1, x_1) & \cdots & k(x_1, x_N) \\ \vdots & \ddots & \vdots \\ k(x_N, x_1) & \cdots & k(x_N, x_N) \end{bmatrix}^{1/2} \right) \\ y_i &\sim \textsf{Normal} \left( \text{loc}=f(x_i), \text{scale}=\sigma \right), \quad i = 1, \ldots, N \end{aligned} \]

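Note the exponent \(\frac{1}{2}\) on the covariance matrix: it denotes a Cholesky factor, that is, a lower-triangular \(L\) with \(LL^\top = K\). We need this factor because the MVN is a location-scale family: a draw is formed as the loc vector plus the scale matrix times a vector of independent standard normals. Unfortunately, the Cholesky decomposition is computationally expensive, taking \(O(N^3)\) time and \(O(N^2)\) space. Much of the GP literature is devoted to coping with this seemingly innocuous little exponent.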
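The location-scale construction can be checked numerically. This NumPy sketch uses an illustrative 4-point kernel matrix with a small jitter on the diagonal (both are assumptions). It computes the Cholesky factor, forms \(\mu + Lz\) from standard-normal \(z\), and compares the empirical covariance of the draws with \(K\).

```python
import numpy as np

rng = np.random.default_rng(1)
x = np.linspace(-1.0, 1.0, 4)
# Covariance from an exponentiated quadratic kernel (amplitude 1, length scale 0.5), plus jitter.
K = np.exp(-0.5 * (x[:, None] - x[None, :]) ** 2 / 0.5 ** 2) + 1e-6 * np.eye(4)
mu = np.zeros(4)

# The O(N^3) step: lower-triangular L with L @ L.T == K (the "K^{1/2}" above).
L = np.linalg.cholesky(K)

# Location-scale reparameterization: if z ~ N(0, I), then mu + L z ~ N(mu, L L^T) = N(mu, K).
z = rng.standard_normal(size=(4, 100_000))
draws = mu[:, None] + L @ z          # each column is one joint draw

empirical_cov = np.cov(draws)        # rows are variables, columns are draws
print(np.allclose(L @ L.T, K))
print(np.abs(empirical_cov - K).max())  # small Monte Carlo error
```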

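It is common to take the prior mean function to be constant, often zero. A few notational conventions are also convenient. One often writes \(\mathbf{f}\) for the finite vector of sampled function values. Several notations are in use for the covariance matrix obtained by applying \(k\) to pairs of inputs. Following (Quiñonero-Candela, 2005), we note that the entries of this matrix are covariances of function values at particular input points. We can therefore write the covariance matrix as \(K_{AB}\), where \(A\) and \(B\) indicate the collections of function values along the two matrix dimensions.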

For example, given observed data \((\mathbf{x}, \mathbf{y})\) with implied latent function values \(\mathbf{f}\), we can write

\[ K_{\mathbf{f},\mathbf{f} } = \begin{bmatrix} k(x_1, x_1) & \cdots & k(x_1, x_N) \\ \vdots & \ddots & \vdots \\ k(x_N, x_1) & \cdots & k(x_N, x_N) \\ \end{bmatrix} \]

Similarly, we can mix sets of inputs, as in

\[ K_{\mathbf{f},*} = \begin{bmatrix} k(x_1, x^*_1) & \cdots & k(x_1, x^*_T) \\ \vdots & \ddots & \vdots \\ k(x_N, x^*_1) & \cdots & k(x_N, x^*_T) \\ \end{bmatrix} \]

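where we suppose there are \(N\) training inputs \(x_1, \ldots, x_N\) and \(T\) test inputs \(x^*_1, \ldots, x^*_T\). The above generative process can then be written compactly as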

\[ \begin{aligned} \mathbf{f} \sim \: & \textsf{MultivariateNormal} \left( \text{loc}=\mathbf{0}, \text{scale}=K_{\mathbf{f},\mathbf{f} }^{1/2} \right) \\ y_i \sim \: & \textsf{Normal} \left( \text{loc}=f_i, \text{scale}=\sigma \right), \quad i = 1, \ldots, N \end{aligned} \]

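The sampling operation in the first line yields a finite set of \(N\) function values from a multivariate Gaussian, *not an entire function as in the GP draw notation above*. The second line describes \(N\) draws from *univariate* Gaussians centered at the respective function values, with fixed observation noise variance \(\sigma^2\).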
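The two-line generative process can be simulated directly. This NumPy sketch uses assumed values for the inputs, the kernel settings, and the noise scale \(\sigma = 0.3\). It also checks a fact that the posterior formulas rely on: integrating out \(\mathbf{f}\) leaves \(\mathbf{y} \sim \textsf{MultivariateNormal}(\mathbf{0}, K_{\mathbf{f},\mathbf{f}} + \sigma^2 I)\).

```python
import numpy as np

rng = np.random.default_rng(2)
N, noise_scale = 3, 0.3   # sigma, the observation noise scale, is an assumed value
x = np.array([-0.5, 0.0, 0.5])
# K_ff from an exponentiated quadratic kernel (amplitude 1, length scale 0.5).
K_ff = np.exp(-0.5 * (x[:, None] - x[None, :]) ** 2 / 0.5 ** 2)
L = np.linalg.cholesky(K_ff + 1e-9 * np.eye(N))   # jitter for numerical stability

num_draws = 200_000
f = L @ rng.standard_normal((N, num_draws))                # first line: f ~ MVN(0, K_ff)
y = f + noise_scale * rng.standard_normal((N, num_draws))  # second line: y_i ~ Normal(f_i, sigma)

# Marginalizing out f: Cov[y] = K_ff + sigma^2 I.
marginal_cov = K_ff + noise_scale ** 2 * np.eye(N)
print(np.abs(np.cov(y) - marginal_cov).max())  # small Monte Carlo error
```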

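With this generative model in place, we can turn to the posterior inference problem. Its solution is a posterior distribution over function values at a new set of test points, conditioned on the noisy data observed from the process above.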

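Using the above notation, we can compactly write the posterior predictive distribution over future (noisy) observations, conditioned on the corresponding inputs and the training data (for details, see Section 2.2 of Rasmussen & Williams):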

\[ \mathbf{y}^* \mid \mathbf{x}^*, \mathbf{x}, \mathbf{y} \sim \textsf{MultivariateNormal} \left( \text{loc}=\mathbf{\mu}^*, \text{scale}=(\Sigma^*)^{1/2} \right), \]

where

\[ \mathbf{\mu}^* = K_{*,\mathbf{f} }\left(K_{\mathbf{f},\mathbf{f} } + \sigma^2 I \right)^{-1} \mathbf{y} \]

and

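\[ \Sigma^* = K_{*,*} - K_{*,\mathbf{f} } \left(K_{\mathbf{f},\mathbf{f} } + \sigma^2 I \right)^{-1} K_{\mathbf{f},*} + \sigma^2 I \]

Without the final \(\sigma^2 I\) term, \(\Sigma^*\) is the posterior covariance of the noise-free function values \(\mathbf{f}^*\). The code below samples \(\mathbf{f}^*\), which it does by setting predictive_noise_variance=0.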
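Here is a minimal NumPy sketch of these formulas for 1-D inputs. It assumes an exponentiated quadratic kernel with fixed, illustrative hyperparameters; the helpers eq_kernel and gp_posterior are hypothetical. The function returns \(\mathbf{\mu}^*\) and the covariance \(K_{*,*} - K_{*,\mathbf{f}}(K_{\mathbf{f},\mathbf{f}} + \sigma^2 I)^{-1}K_{\mathbf{f},*}\) of the noise-free \(\mathbf{f}^*\). Adding \(\sigma^2 I\) to that covariance gives the covariance for noisy \(\mathbf{y}^*\). Instead of forming the inverse explicitly, it reuses a single Cholesky factorization of \(K_{\mathbf{f},\mathbf{f}} + \sigma^2 I\), which is the usual numerically stable approach.

```python
import numpy as np

def eq_kernel(a, b, amplitude=1.0, length_scale=0.3):
    # Exponentiated quadratic kernel on 1-D inputs (hypothetical helper).
    return amplitude ** 2 * np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale ** 2)

def gp_posterior(x_train, y_train, x_test, noise_variance):
    """Posterior mean and covariance of the noise-free f* at x_test."""
    K_ff = eq_kernel(x_train, x_train)   # K_{f,f}, shape [N, N]
    K_sf = eq_kernel(x_test, x_train)    # K_{*,f}, shape [T, N]
    K_ss = eq_kernel(x_test, x_test)     # K_{*,*}, shape [T, T]
    # One Cholesky factorization of (K_ff + sigma^2 I) replaces the explicit inverse.
    # (np.linalg.solve ignores the triangular structure; scipy.linalg.solve_triangular would use it.)
    L = np.linalg.cholesky(K_ff + noise_variance * np.eye(x_train.size))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))  # (K_ff + sigma^2 I)^{-1} y
    V = np.linalg.solve(L, K_sf.T)                              # L^{-1} K_{f,*}
    mean = K_sf @ alpha
    cov = K_ss - V.T @ V   # V^T V = K_{*,f} (K_ff + sigma^2 I)^{-1} K_{f,*}
    return mean, cov

x_train = np.array([-0.8, -0.2, 0.4, 0.9])
y_train = np.sin(3 * np.pi * x_train)
x_test = np.linspace(-1.0, 1.0, 7)
mean, cov = gp_posterior(x_train, y_train, x_test, noise_variance=0.1)
print(mean.shape, cov.shape)  # (7,) (7, 7)
```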

Imports

```python
import time

import numpy as np
import matplotlib.pyplot as plt
import tensorflow as tf
import tf_keras
import tensorflow_probability as tfp

tfb = tfp.bijectors
tfd = tfp.distributions
tfk = tfp.math.psd_kernels

# Render plots inline. `%pylab inline` is deprecated and floods the namespace;
# every call below already uses explicit `np.` / `plt.` prefixes.
%matplotlib inline

# Configure plot defaults
plt.rcParams['axes.facecolor'] = 'white'
plt.rcParams['grid.color'] = '#666666'
%config InlineBackend.figure_format = 'png'
```


Example: Exact GP Regression on Noisy Sinusoidal Data

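Here we generate training data from a noisy sinusoid, then sample a bunch of curves from the posterior of the GP regression model. We use Adam to optimize the kernel hyperparameters by minimizing the negative log joint probability of the data and the hyperparameters under the model, which gives a maximum a posteriori estimate. We plot the training curve, followed by the true function and the posterior samples.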

```python
def sinusoid(x):
    return np.sin(3 * np.pi * x[..., 0])

def generate_1d_data(num_training_points, observation_noise_variance):
    """Generate noisy sinusoidal observations at a random set of points.

    Returns:
        observation_index_points, observations
    """
    index_points_ = np.random.uniform(-1., 1., (num_training_points, 1))
    index_points_ = index_points_.astype(np.float64)
    # y = f(x) + noise
    observations_ = (sinusoid(index_points_) +
                     np.random.normal(loc=0,
                                      scale=np.sqrt(observation_noise_variance),
                                      size=(num_training_points)))
    return index_points_, observations_

# Generate training data with a known noise level (we'll later try to recover
# this value from the data).
NUM_TRAINING_POINTS = 100
observation_index_points_, observations_ = generate_1d_data(
    num_training_points=NUM_TRAINING_POINTS,
    observation_noise_variance=.1)
```

We'll put priors on the kernel hyperparameters and write the joint distribution of the hyperparameters and observed data using tfd.JointDistributionNamed.

```python
def build_gp(amplitude, length_scale, observation_noise_variance):
    """Defines the conditional dist. of GP outputs, given kernel parameters."""

    # Create the covariance kernel, which will be shared between the prior (which we
    # use for maximum a posteriori training of the hyperparameters) and the posterior
    # (which we use for posterior predictive sampling).
    kernel = tfk.ExponentiatedQuadratic(amplitude, length_scale)

    # Create the GP prior distribution, which we will use to train the model
    # parameters.
    return tfd.GaussianProcess(
        kernel=kernel,
        index_points=observation_index_points_,
        observation_noise_variance=observation_noise_variance)

gp_joint_model = tfd.JointDistributionNamed({
    'amplitude': tfd.LogNormal(loc=0., scale=np.float64(1.)),
    'length_scale': tfd.LogNormal(loc=0., scale=np.float64(1.)),
    'observation_noise_variance': tfd.LogNormal(loc=0., scale=np.float64(1.)),
    'observations': build_gp,
})
```
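For intuition, here is a NumPy sketch of the log density that gp_joint_model.log_prob evaluates once the observations are fixed. It is the sum of three LogNormal(0, 1) log-densities for the hyperparameters and the multivariate normal log-likelihood of the observations with covariance \(K_{\mathbf{f},\mathbf{f}} + \sigma^2 I\). It assumes 1-D inputs and uses hypothetical helper names. TFP's own implementation also adds a small diagonal jitter, so its values can differ slightly.

```python
import numpy as np

def lognormal_logpdf(v, loc=0.0, scale=1.0):
    # Log density of LogNormal(loc, scale) at v > 0.
    z = (np.log(v) - loc) / scale
    return -0.5 * z ** 2 - np.log(v * scale * np.sqrt(2 * np.pi))

def gp_log_marginal_likelihood(x, y, amplitude, length_scale, noise_variance):
    # log MVN(y | 0, K_ff + noise_variance * I), exponentiated quadratic kernel on 1-D x.
    K = amplitude ** 2 * np.exp(-0.5 * (x[:, None] - x[None, :]) ** 2 / length_scale ** 2)
    L = np.linalg.cholesky(K + noise_variance * np.eye(x.size))
    alpha = np.linalg.solve(L, y)   # alpha @ alpha = y^T (K + noise_variance I)^{-1} y
    return (-0.5 * alpha @ alpha
            - np.sum(np.log(np.diag(L)))   # equals 0.5 * log det(K + noise_variance I)
            - 0.5 * x.size * np.log(2 * np.pi))

def log_joint(x, y, amplitude, length_scale, noise_variance):
    # LogNormal(0, 1) hyperpriors, as in gp_joint_model, plus the GP likelihood.
    log_prior = sum(lognormal_logpdf(v) for v in (amplitude, length_scale, noise_variance))
    return log_prior + gp_log_marginal_likelihood(x, y, amplitude, length_scale, noise_variance)

rng = np.random.default_rng(3)
x = rng.uniform(-1.0, 1.0, 20)
y = np.sin(3 * np.pi * x) + rng.normal(0.0, np.sqrt(0.1), 20)
print(log_joint(x, y, amplitude=1.0, length_scale=0.2, noise_variance=0.1))
```

The Cholesky-based form avoids both an explicit inverse and an explicit determinant. Both are read off the triangular factor.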

We can sanity-check our implementation by confirming that we can sample from the prior and compute the log-density of a sample.

```python
x = gp_joint_model.sample()
lp = gp_joint_model.log_prob(x)

print("sampled {}".format(x))
print("log_prob of sample: {}".format(lp))
```

```
sampled {'observation_noise_variance': <tf.Tensor: shape=(), dtype=float64, numpy=2.067952217184325>, 'length_scale': <tf.Tensor: shape=(), dtype=float64, numpy=1.154435715487831>, 'amplitude': <tf.Tensor: shape=(), dtype=float64, numpy=5.383850737703549>, 'observations': <tf.Tensor: shape=(100,), dtype=float64, numpy=
array([-2.37070577, -2.05363838, -0.95152824, 3.73509388, -0.2912646 ,
       0.46112342, -1.98018513, -2.10295857, -1.33589756, -2.23027226,
       -2.25081374, -0.89450835, -2.54196452, 1.46621647, 2.32016193,
       5.82399989, 2.27241034, -0.67523432, -1.89150197, -1.39834474,
       -2.33954116, 0.7785609 , -1.42763627, -0.57389025, -0.18226098,
       -3.45098732, 0.27986652, -3.64532398, -1.28635204, -2.42362875,
       0.01107288, -2.53222176, -2.0886136 , -5.54047694, -2.18389607,
       -1.11665628, -3.07161217, -2.06070336, -0.84464262, 1.29238438,
       -0.64973999, -2.63805504, -3.93317576, 0.65546645, 2.24721181,
       -0.73403676, 5.31628298, -1.2208384 , 4.77782252, -1.42978168,
       -3.3089274 , 3.25370494, 3.02117591, -1.54862932, -1.07360811,
       1.2004856 , -4.3017773 , -4.95787789, -1.95245901, -2.15960839,
       -3.78592731, -1.74096185, 3.54891595, 0.56294143, 1.15288455,
       -0.77323696, 2.34430694, -1.05302007, -0.7514684 , -0.98321063,
       -3.01300144, -3.00033274, 0.44200837, 0.45060886, -1.84497318,
       -1.89616746, -2.15647664, -2.65672581, -3.65493379, 1.70923375,
       -3.88695218, -0.05151283, 4.51906677, -2.28117003, 3.03032793,
       -1.47713194, -0.35625273, 3.73501587, -2.09328047, -0.60665614,
       -0.78177188, -0.67298545, 2.97436033, -0.29407932, 2.98482427,
       -1.54951178, 2.79206821, 4.2225733 , 2.56265198, 2.80373284])>}
log_prob of sample: -194.96442183797524
```

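Now let's optimize to find the parameter values with the highest posterior probability. We'll define a variable for each parameter and constrain its value to be positive.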

```python
# Create the trainable model parameters, which we'll subsequently optimize.
# Note that we constrain them to be strictly positive.

constrain_positive = tfb.Shift(np.finfo(np.float64).tiny)(tfb.Exp())

amplitude_var = tfp.util.TransformedVariable(
    initial_value=1.,
    bijector=constrain_positive,
    name='amplitude',
    dtype=np.float64)

length_scale_var = tfp.util.TransformedVariable(
    initial_value=1.,
    bijector=constrain_positive,
    name='length_scale',
    dtype=np.float64)

observation_noise_variance_var = tfp.util.TransformedVariable(
    initial_value=1.,
    bijector=constrain_positive,
    name='observation_noise_variance_var',
    dtype=np.float64)

trainable_variables = [v.trainable_variables[0] for v in
                       [amplitude_var,
                        length_scale_var,
                        observation_noise_variance_var]]
```
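The constrain_positive bijector applies Exp and then shifts by the smallest positive float64. The optimizer therefore updates an unconstrained real number, while the model only ever sees a strictly positive value. Below is a NumPy sketch of the forward map and its inverse. These are plain functions written for illustration, not the TFP bijector API.

```python
import numpy as np

TINY = np.finfo(np.float64).tiny  # same shift as in constrain_positive

def forward(u):
    # Unconstrained -> positive: Exp followed by Shift(tiny).
    return np.exp(u) + TINY

def inverse(x):
    # Positive -> unconstrained: the space the optimizer actually updates.
    return np.log(x - TINY)

u = np.array([-30.0, 0.0, 5.0])     # arbitrary real values
x = forward(u)
print(np.all(x > 0))                # every unconstrained value maps into (0, inf)
print(np.allclose(inverse(x), u))   # round trip recovers u
print(inverse(1.0))                 # unconstrained value behind initial_value=1.
```

Under this sketch, initial_value=1. corresponds to an unconstrained starting point of log(1) = 0. That unconstrained variable is what trainable_variables collects for the optimizer.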

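To condition the model on our observed data, we'll define a target_log_prob function. It takes the (still to be inferred) kernel hyperparameters and returns the joint log probability with the observations fixed to observations_.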

```python
def target_log_prob(amplitude, length_scale, observation_noise_variance):
    return gp_joint_model.log_prob({
        'amplitude': amplitude,
        'length_scale': length_scale,
        'observation_noise_variance': observation_noise_variance,
        'observations': observations_
    })

# Now we optimize the model parameters.
num_iters = 1000
optimizer = tf_keras.optimizers.Adam(learning_rate=.01)

# Use `tf.function` to trace the loss for more efficient evaluation.
@tf.function(autograph=False, jit_compile=False)
def train_model():
    with tf.GradientTape() as tape:
        loss = -target_log_prob(amplitude_var, length_scale_var,
                                observation_noise_variance_var)
    grads = tape.gradient(loss, trainable_variables)
    optimizer.apply_gradients(zip(grads, trainable_variables))
    return loss

# Store the loss (negative log joint probability) at each step so we can plot the progress.
lls_ = np.zeros(num_iters, np.float64)
for i in range(num_iters):
    loss = train_model()
    lls_[i] = loss

print('Trained parameters:')
print('amplitude: {}'.format(amplitude_var._value().numpy()))
print('length_scale: {}'.format(length_scale_var._value().numpy()))
print('observation_noise_variance: {}'.format(observation_noise_variance_var._value().numpy()))
```

```
Trained parameters:
amplitude: 0.9176153445125278
length_scale: 0.18444082442910079
observation_noise_variance: 0.0880273312850989
```

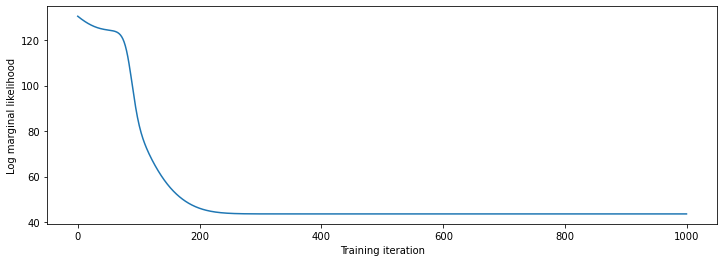

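```python
# Plot the loss evolution. lls_ holds the loss, i.e. the negative log joint
# probability, so the curve should decrease.
plt.figure(figsize=(12, 4))
plt.plot(lls_)
plt.xlabel("Training iteration")
plt.ylabel("Negative log joint probability (loss)")
plt.show()
```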

```python
# Having trained the model, we'd like to sample from the posterior conditioned
# on observations. We'd like the samples to be at points other than the training
# inputs.
predictive_index_points_ = np.linspace(-1.2, 1.2, 200, dtype=np.float64)
# Reshape to [200, 1] -- 1 is the dimensionality of the feature space.
predictive_index_points_ = predictive_index_points_[..., np.newaxis]

optimized_kernel = tfk.ExponentiatedQuadratic(amplitude_var, length_scale_var)
gprm = tfd.GaussianProcessRegressionModel(
    kernel=optimized_kernel,
    index_points=predictive_index_points_,
    observation_index_points=observation_index_points_,
    observations=observations_,
    observation_noise_variance=observation_noise_variance_var,
    predictive_noise_variance=0.)

# Create op to draw 50 independent samples, each of which is a *joint* draw
# from the posterior at the predictive_index_points_. Since we have 200 input
# locations as defined above, this posterior distribution over corresponding
# function values is a 200-dimensional multivariate Gaussian distribution!
num_samples = 50
samples = gprm.sample(num_samples)
```

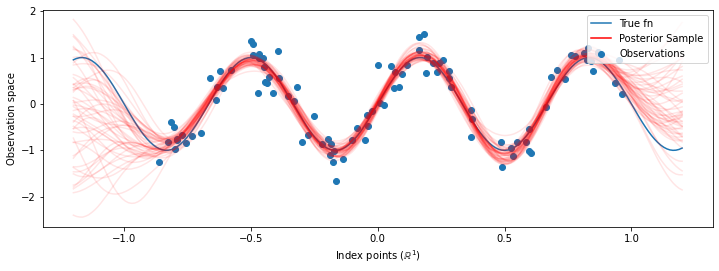

```python
# Plot the true function, observations, and posterior samples.
plt.figure(figsize=(12, 4))
plt.plot(predictive_index_points_, sinusoid(predictive_index_points_),
         label='True fn')
plt.scatter(observation_index_points_[:, 0], observations_,
            label='Observations')
for i in range(num_samples):
    plt.plot(predictive_index_points_, samples[i, :], c='r', alpha=.1,
             label='Posterior Sample' if i == 0 else None)
leg = plt.legend(loc='upper right')
# Make legend entries opaque. `legend_handles` replaces `legendHandles`,
# which was deprecated in Matplotlib 3.7.
for lh in leg.legend_handles:
    lh.set_alpha(1)
plt.xlabel(r"Index points ($\mathbb{R}^1$)")
plt.ylabel("Observation space")
plt.show()
```

Marginalizing Hyperparameters with HMC

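Instead of optimizing the hyperparameters, let's try integrating them out with Hamiltonian Monte Carlo. Specifically, we use the No-U-Turn Sampler, an adaptive variant of HMC, wrapped in dual-averaging step-size adaptation. We'll first define and run a sampler that approximately draws from the posterior distribution over the kernel hyperparameters, given the observations.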

```python
num_results = 100
num_burnin_steps = 50

sampler = tfp.mcmc.TransformedTransitionKernel(
    tfp.mcmc.NoUTurnSampler(
        target_log_prob_fn=target_log_prob,
        step_size=tf.cast(0.1, tf.float64)),
    bijector=[constrain_positive, constrain_positive, constrain_positive])

adaptive_sampler = tfp.mcmc.DualAveragingStepSizeAdaptation(
    inner_kernel=sampler,
    num_adaptation_steps=int(0.8 * num_burnin_steps),
    target_accept_prob=tf.cast(0.75, tf.float64))

initial_state = [tf.cast(x, tf.float64) for x in [1., 1., 1.]]

# Speed up sampling by tracing with `tf.function`.
@tf.function(autograph=False, jit_compile=False)
def do_sampling():
    return tfp.mcmc.sample_chain(
        kernel=adaptive_sampler,
        current_state=initial_state,
        num_results=num_results,
        num_burnin_steps=num_burnin_steps,
        trace_fn=lambda current_state, kernel_results: kernel_results)

t0 = time.time()
samples, kernel_results = do_sampling()
t1 = time.time()
print("Inference ran in {:.2f}s.".format(t1-t0))
```

Inference ran in 9.00s.

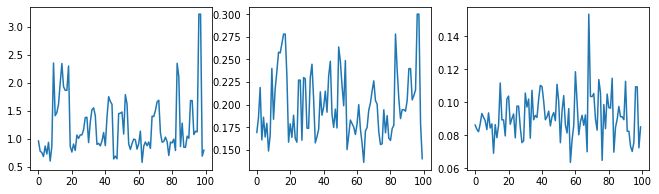
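The notebook relies on tfp.mcmc.NoUTurnSampler with dual-averaging step-size adaptation. To show the core mechanism those build on, here is a plain fixed-step HMC sketch in NumPy. It uses an identity mass matrix and has no U-turn criterion, no adaptation, and no bijector. It runs on a toy standard-normal target rather than the GP hyperparameter posterior. The function hmc_sample is hypothetical.

```python
import numpy as np

def hmc_sample(log_prob, grad_log_prob, x0, num_samples, step_size=0.2, num_leapfrog=10, seed=0):
    """Plain HMC: fixed step size, identity mass matrix, Metropolis correction."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    samples = np.empty((num_samples,) + x.shape)
    for i in range(num_samples):
        p = rng.standard_normal(x.shape)            # resample the momentum
        x_new, p_new = x.copy(), p.copy()
        # Leapfrog integration: half momentum step, alternating full steps, half momentum step.
        p_new += 0.5 * step_size * grad_log_prob(x_new)
        for _ in range(num_leapfrog - 1):
            x_new += step_size * p_new
            p_new += step_size * grad_log_prob(x_new)
        x_new += step_size * p_new
        p_new += 0.5 * step_size * grad_log_prob(x_new)
        # Accept or reject based on the change in H(x, p) = -log_prob(x) + |p|^2 / 2.
        log_accept = (log_prob(x_new) - 0.5 * p_new @ p_new) - (log_prob(x) - 0.5 * p @ p)
        if np.log(rng.uniform()) < log_accept:
            x = x_new
        samples[i] = x
    return samples

# Toy target: a 2-D standard normal.
log_prob = lambda x: -0.5 * x @ x
grad_log_prob = lambda x: -x
draws = hmc_sample(log_prob, grad_log_prob, x0=np.zeros(2), num_samples=2000)
print(draws.mean(axis=0), draws.var(axis=0))  # roughly [0, 0] and [1, 1]
```

NUTS removes the need to hand-tune num_leapfrog by extending each trajectory until it starts to double back. The dual-averaging wrapper tunes step_size during burn-in, which is why the notebook does not fix either by hand.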

Let's sanity-check the sampler by examining the hyperparameter traces.

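```python
(amplitude_samples,
 length_scale_samples,
 observation_noise_variance_samples) = samples

# One trace plot per hyperparameter, with the sample index on the x-axis.
names = ['amplitude', 'length_scale', 'observation_noise_variance']
f = plt.figure(figsize=[15, 3])
for i, (name, s) in enumerate(zip(names, samples)):
    ax = f.add_subplot(1, len(samples), i + 1)
    ax.plot(s)
    ax.set_title(name)
plt.show()
```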

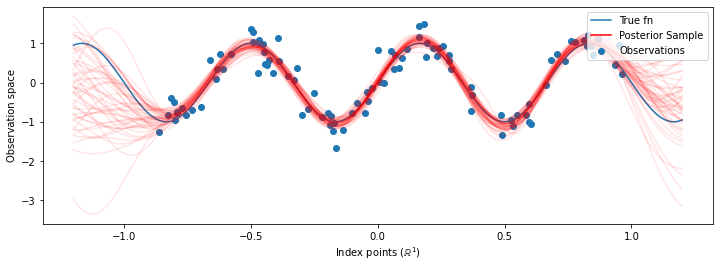

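Now, instead of constructing a single GP with the optimized hyperparameters, we construct the *posterior predictive distribution* as a mixture of GPs, each defined by one sample from the posterior distribution over hyperparameters. This is a Monte Carlo approximation of the integral over the hyperparameter posterior, and it yields the marginal predictive distribution at unobserved locations.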

```python
# The sampled hyperparams have a leading batch dimension, `[num_results, ...]`,
# so they construct a *batch* of kernels.
batch_of_posterior_kernels = tfk.ExponentiatedQuadratic(
    amplitude_samples, length_scale_samples)

# The batch of kernels creates a batch of GP predictive models, one for each
# posterior sample.
batch_gprm = tfd.GaussianProcessRegressionModel(
    kernel=batch_of_posterior_kernels,
    index_points=predictive_index_points_,
    observation_index_points=observation_index_points_,
    observations=observations_,
    observation_noise_variance=observation_noise_variance_samples,
    predictive_noise_variance=0.)

# To construct the marginal predictive distribution, we average with uniform
# weight over the posterior samples. The float64 logits match the components' dtype.
predictive_gprm = tfd.MixtureSameFamily(
    mixture_distribution=tfd.Categorical(
        logits=tf.zeros([num_results], dtype=tf.float64)),
    components_distribution=batch_gprm)

num_samples = 50
samples = predictive_gprm.sample(num_samples)
```
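Sampling from this MixtureSameFamily amounts to picking one of the num_results GP components uniformly at random, which is what a Categorical with all-zero logits encodes, and then drawing from that component. The NumPy sketch below mimics this at a single index point with made-up component means and variances. It then checks the law of total variance: the mixture's variance equals the average component variance plus the variance of the component means. This is how hyperparameter uncertainty widens the predictive distribution.

```python
import numpy as np

rng = np.random.default_rng(4)
# Hypothetical predictive moments at one index point, one pair per hyperparameter sample.
component_means = np.array([0.9, 1.1, 1.0, 0.7])
component_vars = np.array([0.04, 0.05, 0.03, 0.10])

# Equal-weight mixture: pick a component index uniformly, then sample from that component.
k = rng.integers(0, component_means.size, size=200_000)
draws = rng.normal(component_means[k], np.sqrt(component_vars[k]))

# Law of total variance: Var[y] = E[Var[y | k]] + Var[E[y | k]].
mixture_mean = component_means.mean()
mixture_var = component_vars.mean() + component_means.var()
print(draws.mean(), mixture_mean)  # close agreement
print(draws.var(), mixture_var)    # close agreement
```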

```python
# Plot the true function, observations, and posterior samples.
plt.figure(figsize=(12, 4))
plt.plot(predictive_index_points_, sinusoid(predictive_index_points_),
         label='True fn')
plt.scatter(observation_index_points_[:, 0], observations_,
            label='Observations')
for i in range(num_samples):
    plt.plot(predictive_index_points_, samples[i, :], c='r', alpha=.1,
             label='Posterior Sample' if i == 0 else None)
leg = plt.legend(loc='upper right')
# Make legend entries opaque. `legend_handles` replaces `legendHandles`,
# which was deprecated in Matplotlib 3.7.
for lh in leg.legend_handles:
    lh.set_alpha(1)
plt.xlabel(r"Index points ($\mathbb{R}^1$)")
plt.ylabel("Observation space")
plt.show()
```

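Although the difference is subtle in this case, in general we expect the posterior predictive distribution to generalize better (that is, to assign higher likelihood to held-out data) than simply using the most probable parameters, as we did above.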