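This notebook classifies movie reviews as positive or negative using the text of the review. This is an example of binary (two-class) classification, an important and widely applicable kind of machine learning problem.

We'll use the IMDB dataset, which contains the text of 50,000 movie reviews from the Internet Movie Database. These are split into 25,000 reviews for training and 25,000 reviews for testing. The training and testing sets are balanced, meaning they contain an equal number of positive and negative reviews.

This notebook uses tf.keras, a high-level API to build and train models in TensorFlow, and TensorFlow Hub, a library and platform for transfer learning. For a more advanced text classification tutorial using tf.keras, see the MLCC Text Classification Guide.

More models

More expressive or better-performing models that you can use to generate the text embedding are available on TensorFlow Hub.

Setup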

import numpy as np

import tensorflow as tf

import tensorflow_hub as hub

import tensorflow_datasets as tfds

import matplotlib.pyplot as plt

print("Version: ", tf.__version__)

print("Eager mode: ", tf.executing_eagerly())

print("Hub version: ", hub.__version__)

print("GPU is", "available" if tf.config.list_physical_devices('GPU') else "NOT AVAILABLE")

2023-12-08 12:29:56.398917: E external/local_xla/xla/stream_executor/cuda/cuda_dnn.cc:9261] Unable to register cuDNN factory: Attempting to register factory for plugin cuDNN when one has already been registered
2023-12-08 12:29:56.398969: E external/local_xla/xla/stream_executor/cuda/cuda_fft.cc:607] Unable to register cuFFT factory: Attempting to register factory for plugin cuFFT when one has already been registered
2023-12-08 12:29:56.400437: E external/local_xla/xla/stream_executor/cuda/cuda_blas.cc:1515] Unable to register cuBLAS factory: Attempting to register factory for plugin cuBLAS when one has already been registered
Version: 2.15.0
Eager mode: True
Hub version: 0.15.0
GPU is NOT AVAILABLE
2023-12-08 12:29:59.897456: E external/local_xla/xla/stream_executor/cuda/cuda_driver.cc:274] failed call to cuInit: CUDA_ERROR_NO_DEVICE: no CUDA-capable device is detected

Download the IMDB dataset

The IMDB dataset is available on TensorFlow Datasets. The following code downloads the IMDB dataset to your machine (or the Colab runtime):

train_data, test_data = tfds.load(name="imdb_reviews", split=["train", "test"],

batch_size=-1, as_supervised=True)

train_examples, train_labels = tfds.as_numpy(train_data)

test_examples, test_labels = tfds.as_numpy(test_data)

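Explore the data

Let's take a moment to understand the format of the data. Each example is a sentence representing the movie review and a corresponding label. The sentence is not preprocessed in any way. The label is an integer value of either 0 or 1, where 0 is a negative review and 1 is a positive review.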

print("Training entries: {}, test entries: {}".format(len(train_examples), len(test_examples)))

Training entries: 25000, test entries: 25000

Let's print the first 10 examples.

train_examples[:10]

array([b"This was an absolutely terrible movie. Don't be lured in by Christopher Walken or Michael Ironside. Both are great actors, but this must simply be their worst role in history. Even their great acting could not redeem this movie's ridiculous storyline. This movie is an early nineties US propaganda piece. The most pathetic scenes were those when the Columbian rebels were making their cases for revolutions. Maria Conchita Alonso appeared phony, and her pseudo-love affair with Walken was nothing but a pathetic emotional plug in a movie that was devoid of any real meaning. I am disappointed that there are movies like this, ruining actor's like Christopher Walken's good name. I could barely sit through it.",

b'I have been known to fall asleep during films, but this is usually due to a combination of things including, really tired, being warm and comfortable on the sette and having just eaten a lot. However on this occasion I fell asleep because the film was rubbish. The plot development was constant. Constantly slow and boring. Things seemed to happen, but with no explanation of what was causing them or why. I admit, I may have missed part of the film, but i watched the majority of it and everything just seemed to happen of its own accord without any real concern for anything else. I cant recommend this film at all.',

b'Mann photographs the Alberta Rocky Mountains in a superb fashion, and Jimmy Stewart and Walter Brennan give enjoyable performances as they always seem to do. <br /><br />But come on Hollywood - a Mountie telling the people of Dawson City, Yukon to elect themselves a marshal (yes a marshal!) and to enforce the law themselves, then gunfighters battling it out on the streets for control of the town? <br /><br />Nothing even remotely resembling that happened on the Canadian side of the border during the Klondike gold rush. Mr. Mann and company appear to have mistaken Dawson City for Deadwood, the Canadian North for the American Wild West.<br /><br />Canadian viewers be prepared for a Reefer Madness type of enjoyable howl with this ludicrous plot, or, to shake your head in disgust.',

b'This is the kind of film for a snowy Sunday afternoon when the rest of the world can go ahead with its own business as you descend into a big arm-chair and mellow for a couple of hours. Wonderful performances from Cher and Nicolas Cage (as always) gently row the plot along. There are no rapids to cross, no dangerous waters, just a warm and witty paddle through New York life at its best. A family film in every sense and one that deserves the praise it received.',

b'As others have mentioned, all the women that go nude in this film are mostly absolutely gorgeous. The plot very ably shows the hypocrisy of the female libido. When men are around they want to be pursued, but when no "men" are around, they become the pursuers of a 14 year old boy. And the boy becomes a man really fast (we should all be so lucky at this age!). He then gets up the courage to pursue his true love.',

b"This is a film which should be seen by anybody interested in, effected by, or suffering from an eating disorder. It is an amazingly accurate and sensitive portrayal of bulimia in a teenage girl, its causes and its symptoms. The girl is played by one of the most brilliant young actresses working in cinema today, Alison Lohman, who was later so spectacular in 'Where the Truth Lies'. I would recommend that this film be shown in all schools, as you will never see a better on this subject. Alison Lohman is absolutely outstanding, and one marvels at her ability to convey the anguish of a girl suffering from this compulsive disorder. If barometers tell us the air pressure, Alison Lohman tells us the emotional pressure with the same degree of accuracy. Her emotional range is so precise, each scene could be measured microscopically for its gradations of trauma, on a scale of rising hysteria and desperation which reaches unbearable intensity. Mare Winningham is the perfect choice to play her mother, and does so with immense sympathy and a range of emotions just as finely tuned as Lohman's. Together, they make a pair of sensitive emotional oscillators vibrating in resonance with one another. This film is really an astonishing achievement, and director Katt Shea should be proud of it. The only reason for not seeing it is if you are not interested in people. But even if you like nature films best, this is after all animal behaviour at the sharp edge. Bulimia is an extreme version of how a tormented soul can destroy her own body in a frenzy of despair. And if we don't sympathise with people suffering from the depths of despair, then we are dead inside.",

b'Okay, you have:<br /><br />Penelope Keith as Miss Herringbone-Tweed, B.B.E. (Backbone of England.) She\'s killed off in the first scene - that\'s right, folks; this show has no backbone!<br /><br />Peter O\'Toole as Ol\' Colonel Cricket from The First War and now the emblazered Lord of the Manor.<br /><br />Joanna Lumley as the ensweatered Lady of the Manor, 20 years younger than the colonel and 20 years past her own prime but still glamourous (Brit spelling, not mine) enough to have a toy-boy on the side. It\'s alright, they have Col. Cricket\'s full knowledge and consent (they guy even comes \'round for Christmas!) Still, she\'s considerate of the colonel enough to have said toy-boy her own age (what a gal!)<br /><br />David McCallum as said toy-boy, equally as pointlessly glamourous as his squeeze. Pilcher couldn\'t come up with any cover for him within the story, so she gave him a hush-hush job at the Circus.<br /><br />and finally:<br /><br />Susan Hampshire as Miss Polonia Teacups, Venerable Headmistress of the Venerable Girls\' Boarding-School, serving tea in her office with a dash of deep, poignant advice for life in the outside world just before graduation. Her best bit of advice: "I\'ve only been to Nancherrow (the local Stately Home of England) once. I thought it was very beautiful but, somehow, not part of the real world." Well, we can\'t say they didn\'t warn us.<br /><br />Ah, Susan - time was, your character would have been running the whole show. They don\'t write \'em like that any more. Our loss, not yours.<br /><br />So - with a cast and setting like this, you have the re-makings of "Brideshead Revisited," right?<br /><br />Wrong! They took these 1-dimensional supporting roles because they paid so well. After all, acting is one of the oldest temp-jobs there is (YOU name another!)<br /><br />First warning sign: lots and lots of backlighting. 
They get around it by shooting outdoors - "hey, it\'s just the sunlight!"<br /><br />Second warning sign: Leading Lady cries a lot. When not crying, her eyes are moist. That\'s the law of romance novels: Leading Lady is "dewy-eyed."<br /><br />Henceforth, Leading Lady shall be known as L.L.<br /><br />Third warning sign: L.L. actually has stars in her eyes when she\'s in love. Still, I\'ll give Emily Mortimer an award just for having to act with that spotlight in her eyes (I wonder . did they use contacts?)<br /><br />And lastly, fourth warning sign: no on-screen female character is "Mrs." She\'s either "Miss" or "Lady."<br /><br />When all was said and done, I still couldn\'t tell you who was pursuing whom and why. I couldn\'t even tell you what was said and done.<br /><br />To sum up: they all live through World War II without anything happening to them at all.<br /><br />OK, at the end, L.L. finds she\'s lost her parents to the Japanese prison camps and baby sis comes home catatonic. Meanwhile (there\'s always a "meanwhile,") some young guy L.L. had a crush on (when, I don\'t know) comes home from some wartime tough spot and is found living on the street by Lady of the Manor (must be some street if SHE\'s going to find him there.) Both war casualties are whisked away to recover at Nancherrow (SOMEBODY has to be "whisked away" SOMEWHERE in these romance stories!)<br /><br />Great drama.',

b'The film is based on a genuine 1950s novel.<br /><br />Journalist Colin McInnes wrote a set of three "London novels": "Absolute Beginners", "City of Spades" and "Mr Love and Justice". I have read all three. The first two are excellent. The last, perhaps an experiment that did not come off. But McInnes\'s work is highly acclaimed; and rightly so. This musical is the novelist\'s ultimate nightmare - to see the fruits of one\'s mind being turned into a glitzy, badly-acted, soporific one-dimensional apology of a film that says it captures the spirit of 1950s London, and does nothing of the sort.<br /><br />Thank goodness Colin McInnes wasn\'t alive to witness it.',

b'I really love the sexy action and sci-fi films of the sixties and its because of the actress\'s that appeared in them. They found the sexiest women to be in these films and it didn\'t matter if they could act (Remember "Candy"?). The reason I was disappointed by this film was because it wasn\'t nostalgic enough. The story here has a European sci-fi film called "Dragonfly" being made and the director is fired. So the producers decide to let a young aspiring filmmaker (Jeremy Davies) to complete the picture. They\'re is one real beautiful woman in the film who plays Dragonfly but she\'s barely in it. Film is written and directed by Roman Coppola who uses some of his fathers exploits from his early days and puts it into the script. I wish the film could have been an homage to those early films. They could have lots of cameos by actors who appeared in them. There is one actor in this film who was popular from the sixties and its John Phillip Law (Barbarella). Gerard Depardieu, Giancarlo Giannini and Dean Stockwell appear as well. I guess I\'m going to have to continue waiting for a director to make a good homage to the films of the sixties. If any are reading this, "Make it as sexy as you can"! I\'ll be waiting!',

b'Sure, this one isn\'t really a blockbuster, nor does it target such a position. "Dieter" is the first name of a quite popular German musician, who is either loved or hated for his kind of acting and thats exactly what this movie is about. It is based on the autobiography "Dieter Bohlen" wrote a few years ago but isn\'t meant to be accurate on that. The movie is filled with some sexual offensive content (at least for American standard) which is either amusing (not for the other "actors" of course) or dumb - it depends on your individual kind of humor or on you being a "Bohlen"-Fan or not. Technically speaking there isn\'t much to criticize. Speaking of me I find this movie to be an OK-movie.'],

dtype=object)
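The printed examples are raw `bytes` objects, and several still contain HTML line breaks (`<br />`). The Hub layer used later accepts the raw strings directly, so no cleanup is required for this tutorial. If you want to read a review more comfortably, a minimal sketch such as the following could be used; the shortened `raw_review` is a stand-in, not a full dataset entry.

```python
# A shortened stand-in for one raw review; the real entries are much longer.
raw_review = (
    b"Mann photographs the Alberta Rocky Mountains in a superb fashion. "
    b"<br /><br />But come on Hollywood"
)

# Decode the bytes to str, then replace HTML line breaks with spaces.
text = raw_review.decode("utf-8").replace("<br />", " ")

# Collapse the runs of whitespace left behind by the replacement.
text = " ".join(text.split())

print(text)
```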

Let's also print the first 10 labels.

train_labels[:10]

array([0, 0, 0, 1, 1, 1, 0, 0, 0, 0])
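Ten labels are too few to judge class balance, but the same check scales to the full label array. The sketch below counts labels with NumPy; `first_labels` is copied by hand from the output above for illustration.

```python
import numpy as np

# The ten labels printed above, copied by hand for illustration.
first_labels = np.array([0, 0, 0, 1, 1, 1, 0, 0, 0, 0])

# bincount returns how many times each integer label occurs:
# index 0 holds the negative count, index 1 the positive count.
counts = np.bincount(first_labels, minlength=2)
print("negative:", counts[0], "positive:", counts[1])
```

Running the same call on `train_labels` should report equal counts for the two classes if the training set is balanced as described earlier.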

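Build the model

The neural network is created by stacking layers. This requires three main architectural decisions:

- How to represent the text?

- How many layers to use in the model?

- How many hidden units to use for each layer?

In this example, the input data consists of sentences. The labels to predict are either 0 or 1.

One way to represent the text is to convert sentences into embedding vectors. We can use a pre-trained text embedding as the first layer, which has two advantages:

- we don't have to worry about text preprocessing,

- we can benefit from transfer learning.

For this example we will use a model from TensorFlow Hub called google/nnlm-en-dim50/2.

There are two other models to test for the sake of this tutorial:

- google/nnlm-en-dim50-with-normalization/2 - Same as google/nnlm-en-dim50/2, but with additional text normalization to remove punctuation. This can help to get better coverage of in-vocabulary embeddings for the tokens in your input text.

- google/nnlm-en-dim128-with-normalization/2 - A larger model with an embedding dimension of 128 instead of the smaller 50.

Let's first create a Keras layer that uses a TensorFlow Hub model to embed the sentences, and try it out on a few input examples. Note that the output shape of the produced embeddings is as expected: (num_examples, embedding_dimension).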

model = "https://tfhub.dev/google/nnlm-en-dim50/2"

hub_layer = hub.KerasLayer(model, input_shape=[], dtype=tf.string, trainable=True)

hub_layer(train_examples[:3])

<tf.Tensor: shape=(3, 50), dtype=float32, numpy=

array([[ 0.5423195 , -0.0119017 , 0.06337538, 0.06862972, -0.16776837,

-0.10581174, 0.16865303, -0.04998824, -0.31148055, 0.07910346,

0.15442263, 0.01488662, 0.03930153, 0.19772711, -0.12215476,

-0.04120981, -0.2704109 , -0.21922152, 0.26517662, -0.80739075,

0.25833532, -0.3100421 , 0.28683215, 0.1943387 , -0.29036492,

0.03862849, -0.7844411 , -0.0479324 , 0.4110299 , -0.36388892,

-0.58034706, 0.30269456, 0.3630897 , -0.15227164, -0.44391504,

0.19462997, 0.19528408, 0.05666234, 0.2890704 , -0.28468323,

-0.00531206, 0.0571938 , -0.3201318 , -0.04418665, -0.08550783,

-0.55847436, -0.23336391, -0.20782952, -0.03543064, -0.17533456],

[ 0.56338924, -0.12339553, -0.10862679, 0.7753425 , -0.07667089,

-0.15752277, 0.01872335, -0.08169781, -0.3521876 , 0.4637341 ,

-0.08492756, 0.07166859, -0.00670817, 0.12686075, -0.19326553,

-0.52626437, -0.3295823 , 0.14394785, 0.09043556, -0.5417555 ,

0.02468163, -0.15456742, 0.68333143, 0.09068331, -0.45327246,

0.23180096, -0.8615696 , 0.34480393, 0.12838456, -0.58759046,

-0.4071231 , 0.23061076, 0.48426893, -0.27128142, -0.5380916 ,

0.47016326, 0.22572741, -0.00830663, 0.2846242 , -0.304985 ,

0.04400365, 0.25025874, 0.14867121, 0.40717036, -0.15422426,

-0.06878027, -0.40825695, -0.3149215 , 0.09283665, -0.20183425],

[ 0.7456154 , 0.21256861, 0.14400336, 0.5233862 , 0.11032254,

0.00902788, -0.3667802 , -0.08938274, -0.24165542, 0.33384594,

-0.11194605, -0.01460047, -0.0071645 , 0.19562712, 0.00685216,

-0.24886718, -0.42796347, 0.18620004, -0.05241098, -0.66462487,

0.13449019, -0.22205497, 0.08633006, 0.43685386, 0.2972681 ,

0.36140734, -0.7196889 , 0.05291241, -0.14316116, -0.1573394 ,

-0.15056328, -0.05988009, -0.08178931, -0.15569411, -0.09303783,

-0.18971172, 0.07620788, -0.02541647, -0.27134508, -0.3392682 ,

-0.10296468, -0.27275252, -0.34078008, 0.20083304, -0.26644835,

0.00655449, -0.05141488, -0.04261917, -0.45413622, 0.20023568]],

dtype=float32)>

Now let's build the full model:

model = tf.keras.Sequential()

model.add(hub_layer)

model.add(tf.keras.layers.Dense(16, activation='relu'))

model.add(tf.keras.layers.Dense(1))

model.summary()

Model: "sequential"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 keras_layer (KerasLayer)    (None, 50)                48190600
 dense (Dense)               (None, 16)                816
 dense_1 (Dense)             (None, 1)                 17
=================================================================
Total params: 48191433 (183.84 MB)
Trainable params: 48191433 (183.84 MB)
Non-trainable params: 0 (0.00 Byte)
_________________________________________________________________

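The layers are stacked sequentially to build the classifier:

- The first layer is a TensorFlow Hub layer. This layer uses a pre-trained SavedModel to map a sentence into its embedding vector. The model that we are using (google/nnlm-en-dim50/2) splits the sentence into tokens, embeds each token and then combines the embeddings. The resulting dimensions are: (num_examples, embedding_dimension).

- This fixed-length output vector is passed through a fully connected (Dense) layer with 16 hidden units.

- The last layer is densely connected with a single output node. This outputs logits: the log-odds of the true class, according to the model.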
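To make the "split, embed each token, combine" step of the first layer more concrete, here is a toy NumPy sketch. Everything in it is an assumption made for illustration: the four-word `vocab`, the random vectors, the zero vector for unknown tokens, and averaging as the combination rule. The real Hub model has its own learned vocabulary and may combine token embeddings differently.

```python
import numpy as np

EMBEDDING_DIM = 4  # toy size; the Hub model in this tutorial uses 50

# Hypothetical token table; the real model ships its own learned vocabulary.
rng = np.random.default_rng(0)
vocab = {
    tok: rng.normal(size=EMBEDDING_DIM)
    for tok in ["great", "terrible", "movie", "acting"]
}
unknown = np.zeros(EMBEDDING_DIM)  # assumption: unseen tokens contribute nothing


def embed_sentence(sentence: str) -> np.ndarray:
    """Split on whitespace, look up each token, and average the vectors."""
    tokens = sentence.lower().split()
    vectors = [vocab.get(tok, unknown) for tok in tokens]
    if not vectors:
        return unknown.copy()
    return np.mean(vectors, axis=0)


sentences = ["Great movie", "Terrible acting in a terrible movie"]
embeddings = np.stack([embed_sentence(s) for s in sentences])
print(embeddings.shape)  # (num_examples, embedding_dimension)
```

Whatever the sentence length, each row has the same width, which is what lets a fixed-size Dense layer consume the output.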

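Hidden units

The above model has two intermediate or "hidden" layers, between the input and output. The number of outputs (units, nodes, or neurons) is the dimension of the representational space for the layer. In other words, it is the amount of freedom the network is allowed when learning an internal representation.

If a model has more hidden units (a higher-dimensional representation space), and/or more layers, then the network can learn more complex representations. However, this makes the network more computationally expensive and may lead to learning unwanted patterns: patterns that improve performance on training data but not on the test data. This is called *overfitting*, and we'll explore it later.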
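The cost of extra hidden units can be checked with simple arithmetic. A fully connected layer has one weight per input-unit pair plus one bias per unit; the helper `dense_params` below is a hypothetical name used only for this sketch. The first two results match the `Param #` column of the model summary.

```python
def dense_params(inputs: int, units: int) -> int:
    """Weights (inputs * units) plus one bias per unit."""
    return inputs * units + units


hidden = dense_params(50, 16)   # 50-dimensional embedding into 16 hidden units
output = dense_params(16, 1)    # 16 hidden units into a single logit
print(hidden, output)           # 816 17

# Doubling the hidden layer width doubles its parameter count.
print(dense_params(50, 32))     # 1632
```

In this model the Dense layers are tiny compared with the 48,190,600 parameters of the embedding layer, which is trainable here because the layer was created with `trainable=True`.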

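Loss function and optimizer

A model needs a loss function and an optimizer for training. Since this is a binary classification problem and the model outputs logits (a single-unit layer with a linear activation), we'll use the binary_crossentropy loss function with from_logits=True.

This isn't the only choice for a loss function; you could, for instance, choose mean_squared_error. But, generally, binary_crossentropy is better for dealing with probabilities: it measures the "distance" between probability distributions, or in our case, between the ground-truth distribution and the predictions.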
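Because the final Dense layer has no activation, the loss in this tutorial is computed with `from_logits=True`. The NumPy sketch below illustrates what that means: applying a sigmoid and then the usual cross-entropy formula gives the same value as a numerically stable formula that works on the logits directly. The labels and logits are made-up values, and the functions are a plain re-derivation for illustration, not the Keras implementation.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def bce_from_probs(y_true, p):
    """Binary cross-entropy computed from probabilities."""
    return np.mean(-(y_true * np.log(p) + (1 - y_true) * np.log(1 - p)))


def bce_from_logits(y_true, logits):
    """Numerically stable equivalent that works directly on logits."""
    return np.mean(
        np.maximum(logits, 0) - logits * y_true + np.log1p(np.exp(-np.abs(logits)))
    )


y_true = np.array([0.0, 1.0, 1.0, 0.0])
logits = np.array([-2.0, 3.0, 0.5, 1.0])  # made-up model outputs

loss_a = bce_from_probs(y_true, sigmoid(logits))
loss_b = bce_from_logits(y_true, logits)
print(loss_a, loss_b)  # the two values agree up to floating-point error
```

Working on logits avoids taking the logarithm of a probability that has been rounded to exactly 0 or 1.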

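Later, when we are exploring regression problems (say, to predict the price of a house), we will see how to use another loss function called mean squared error.

Now, configure the model to use an optimizer and a loss function: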

model.compile(optimizer='adam',

loss=tf.losses.BinaryCrossentropy(from_logits=True),

metrics=[tf.metrics.BinaryAccuracy(threshold=0.0, name='accuracy')])
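The metric above uses `threshold=0.0` because the model outputs logits rather than probabilities. A logit of 0 corresponds to a probability of 0.5, so thresholding logits at 0 yields the same predictions as thresholding probabilities at 0.5. The following sketch checks this with made-up values.

```python
import numpy as np

logits = np.array([-1.2, 0.3, 2.5, -0.1])   # made-up raw model outputs
labels = np.array([0, 1, 1, 1])

# Sigmoid maps each logit to a probability in (0, 1).
probs = 1.0 / (1.0 + np.exp(-logits))

pred_from_logits = (logits > 0.0).astype(int)
pred_from_probs = (probs > 0.5).astype(int)

# Fraction of predictions that match the labels.
accuracy = np.mean(pred_from_logits == labels)
print(pred_from_logits, pred_from_probs, accuracy)
```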

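Create a validation set

When training, we want to check the accuracy of the model on data it hasn't seen before. Create a *validation set* by setting apart 10,000 examples from the original training data. (Why not use the testing set now? Our goal is to develop and tune our model using only the training data, then use the test data just once to evaluate our accuracy.)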

x_val = train_examples[:10000]

partial_x_train = train_examples[10000:]

y_val = train_labels[:10000]

partial_y_train = train_labels[10000:]
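The slices above take the first 10,000 examples in stored order. If you would rather draw the validation set at random, one possible variation (not part of the original tutorial) is to shuffle a single index array and use it for both examples and labels, so the pairs stay aligned. The sketch uses small stand-in arrays and a hypothetical `val_size` of 4.

```python
import numpy as np

# Small stand-ins for train_examples and train_labels.
examples = np.array([f"review {i}" for i in range(10)], dtype=object)
labels = np.array([0, 1] * 5)

rng = np.random.default_rng(seed=42)      # fixed seed so the split is repeatable
order = rng.permutation(len(examples))    # one shuffled index order for both arrays

val_size = 4
val_idx, train_idx = order[:val_size], order[val_size:]

x_val, y_val = examples[val_idx], labels[val_idx]
partial_x_train, partial_y_train = examples[train_idx], labels[train_idx]
print(len(partial_x_train), len(x_val))
```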

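Train the model

Train the model for 40 epochs in mini-batches of 512 samples. This is 40 iterations over all samples in the partial_x_train and partial_y_train arrays. While training, monitor the model's loss and accuracy on the 10,000 samples from the validation set: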

history = model.fit(partial_x_train,

partial_y_train,

epochs=40,

batch_size=512,

validation_data=(x_val, y_val),

verbose=1)

Epoch 1/40
30/30 [==============================] - 22s 710ms/step - loss: 0.6627 - accuracy: 0.6301 - val_loss: 0.6155 - val_accuracy: 0.7314
Epoch 2/40
30/30 [==============================] - 21s 710ms/step - loss: 0.5568 - accuracy: 0.7823 - val_loss: 0.5170 - val_accuracy: 0.7844
Epoch 3/40
30/30 [==============================] - 21s 712ms/step - loss: 0.4290 - accuracy: 0.8513 - val_loss: 0.4133 - val_accuracy: 0.8382
Epoch 4/40
30/30 [==============================] - 21s 708ms/step - loss: 0.3133 - accuracy: 0.8981 - val_loss: 0.3504 - val_accuracy: 0.8575
Epoch 5/40
30/30 [==============================] - 21s 713ms/step - loss: 0.2312 - accuracy: 0.9267 - val_loss: 0.3194 - val_accuracy: 0.8673
Epoch 6/40
30/30 [==============================] - 21s 707ms/step - loss: 0.1734 - accuracy: 0.9482 - val_loss: 0.3065 - val_accuracy: 0.8712
Epoch 7/40
30/30 [==============================] - 21s 709ms/step - loss: 0.1288 - accuracy: 0.9655 - val_loss: 0.3014 - val_accuracy: 0.8740
Epoch 8/40
30/30 [==============================] - 21s 707ms/step - loss: 0.0946 - accuracy: 0.9792 - val_loss: 0.3034 - val_accuracy: 0.8759
Epoch 9/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0691 - accuracy: 0.9877 - val_loss: 0.3098 - val_accuracy: 0.8754
Epoch 10/40
30/30 [==============================] - 21s 710ms/step - loss: 0.0501 - accuracy: 0.9938 - val_loss: 0.3199 - val_accuracy: 0.8747
Epoch 11/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0367 - accuracy: 0.9969 - val_loss: 0.3313 - val_accuracy: 0.8751
Epoch 12/40
30/30 [==============================] - 21s 707ms/step - loss: 0.0268 - accuracy: 0.9986 - val_loss: 0.3430 - val_accuracy: 0.8734
Epoch 13/40
30/30 [==============================] - 21s 710ms/step - loss: 0.0202 - accuracy: 0.9992 - val_loss: 0.3553 - val_accuracy: 0.8714
Epoch 14/40
30/30 [==============================] - 21s 704ms/step - loss: 0.0154 - accuracy: 0.9995 - val_loss: 0.3682 - val_accuracy: 0.8723
Epoch 15/40
30/30 [==============================] - 21s 710ms/step - loss: 0.0121 - accuracy: 0.9999 - val_loss: 0.3787 - val_accuracy: 0.8717
Epoch 16/40
30/30 [==============================] - 21s 710ms/step - loss: 0.0097 - accuracy: 0.9999 - val_loss: 0.3881 - val_accuracy: 0.8699
Epoch 17/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0079 - accuracy: 0.9999 - val_loss: 0.3984 - val_accuracy: 0.8697
Epoch 18/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0066 - accuracy: 0.9999 - val_loss: 0.4079 - val_accuracy: 0.8700
Epoch 19/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0055 - accuracy: 1.0000 - val_loss: 0.4158 - val_accuracy: 0.8692
Epoch 20/40
30/30 [==============================] - 21s 707ms/step - loss: 0.0047 - accuracy: 1.0000 - val_loss: 0.4252 - val_accuracy: 0.8692
Epoch 21/40
30/30 [==============================] - 21s 709ms/step - loss: 0.0041 - accuracy: 1.0000 - val_loss: 0.4321 - val_accuracy: 0.8687
Epoch 22/40
30/30 [==============================] - 21s 706ms/step - loss: 0.0035 - accuracy: 1.0000 - val_loss: 0.4393 - val_accuracy: 0.8685
Epoch 23/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0031 - accuracy: 1.0000 - val_loss: 0.4461 - val_accuracy: 0.8682
Epoch 24/40
30/30 [==============================] - 21s 708ms/step - loss: 0.0027 - accuracy: 1.0000 - val_loss: 0.4538 - val_accuracy: 0.8681
Epoch 25/40
30/30 [==============================] - 21s 712ms/step - loss: 0.0024 - accuracy: 1.0000 - val_loss: 0.4592 - val_accuracy: 0.8668
Epoch 26/40
30/30 [==============================] - 21s 715ms/step - loss: 0.0022 - accuracy: 1.0000 - val_loss: 0.4659 - val_accuracy: 0.8671
Epoch 27/40
30/30 [==============================] - 21s 716ms/step - loss: 0.0020 - accuracy: 1.0000 - val_loss: 0.4713 - val_accuracy: 0.8668
Epoch 28/40
30/30 [==============================] - 21s 711ms/step - loss: 0.0018 - accuracy: 1.0000 - val_loss: 0.4767 - val_accuracy: 0.8662
Epoch 29/40
30/30 [==============================] - 21s 714ms/step - loss: 0.0016 - accuracy: 1.0000 - val_loss: 0.4828 - val_accuracy: 0.8663
Epoch 30/40
30/30 [==============================] - 21s 711ms/step - loss: 0.0015 - accuracy: 1.0000 - val_loss: 0.4876 - val_accuracy: 0.8659
Epoch 31/40
30/30 [==============================] - 21s 714ms/step - loss: 0.0014 - accuracy: 1.0000 - val_loss: 0.4926 - val_accuracy: 0.8658
Epoch 32/40
30/30 [==============================] - 21s 711ms/step - loss: 0.0013 - accuracy: 1.0000 - val_loss: 0.4975 - val_accuracy: 0.8659
Epoch 33/40
30/30 [==============================] - 21s 711ms/step - loss: 0.0012 - accuracy: 1.0000 - val_loss: 0.5021 - val_accuracy: 0.8658
Epoch 34/40
30/30 [==============================] - 21s 710ms/step - loss: 0.0011 - accuracy: 1.0000 - val_loss: 0.5066 - val_accuracy: 0.8655
Epoch 35/40
30/30 [==============================] - 21s 711ms/step - loss: 0.0010 - accuracy: 1.0000 - val_loss: 0.5112 - val_accuracy: 0.8654
Epoch 36/40
30/30 [==============================] - 21s 709ms/step - loss: 9.3126e-04 - accuracy: 1.0000 - val_loss: 0.5151 - val_accuracy: 0.8654
Epoch 37/40
30/30 [==============================] - 21s 711ms/step - loss: 8.7043e-04 - accuracy: 1.0000 - val_loss: 0.5191 - val_accuracy: 0.8654
Epoch 38/40
30/30 [==============================] - 21s 717ms/step - loss: 8.1289e-04 - accuracy: 1.0000 - val_loss: 0.5236 - val_accuracy: 0.8646
Epoch 39/40
30/30 [==============================] - 21s 717ms/step - loss: 7.6191e-04 - accuracy: 1.0000 - val_loss: 0.5278 - val_accuracy: 0.8649
Epoch 40/40
30/30 [==============================] - 21s 717ms/step - loss: 7.1471e-04 - accuracy: 1.0000 - val_loss: 0.5310 - val_accuracy: 0.8648
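The `30/30` shown for each epoch in the log is the number of mini-batches per epoch. It follows from the training-set size and the batch size, as this short calculation shows.

```python
import math

train_size = 25000 - 10000      # examples left after the validation split
batch_size = 512

# The final batch is smaller than 512, so round up.
steps_per_epoch = math.ceil(train_size / batch_size)
print(steps_per_epoch)          # 30

# Size of that last, partial batch.
last_batch = train_size - (steps_per_epoch - 1) * batch_size
print(last_batch)               # 152

# Total gradient updates over the whole run.
total_updates = steps_per_epoch * 40
print(total_updates)            # 1200
```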

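Evaluate the model

Let's see how the model performs. Two values will be returned: loss (a number which represents our error; lower values are better) and accuracy.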

results = model.evaluate(test_examples, test_labels)

print(results)

782/782 [==============================] - 143s 183ms/step - loss: 0.5944 - accuracy: 0.8462
[0.5943577885627747, 0.8462399840354919]

This fairly naive approach achieves an accuracy of about 85%. With more advanced approaches, the model should get closer to 95%.

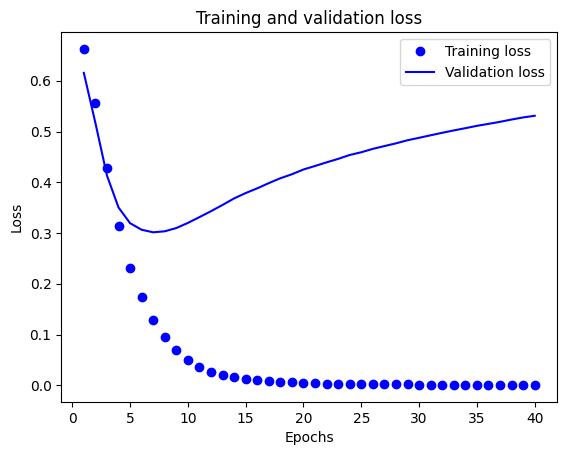

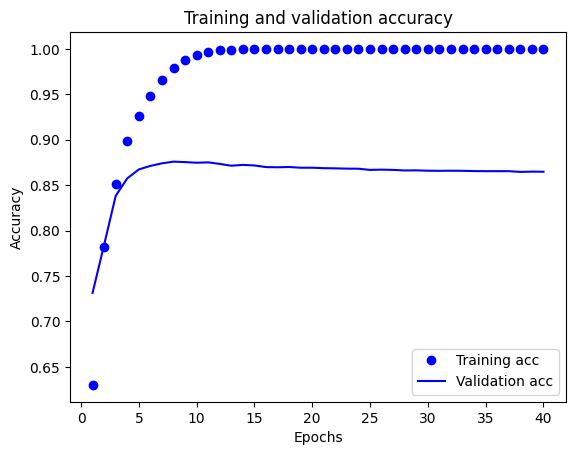

Create a graph of accuracy and loss over time

model.fit() returns a History object that contains a dictionary with everything that happened during training:

history_dict = history.history

history_dict.keys()

dict_keys(['loss', 'accuracy', 'val_loss', 'val_accuracy'])

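There are four entries: one for each metric monitored during training and validation. We can use these to plot the training and validation loss for comparison, as well as the training and validation accuracy: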

acc = history_dict['accuracy']

val_acc = history_dict['val_accuracy']

loss = history_dict['loss']

val_loss = history_dict['val_loss']

epochs = range(1, len(acc) + 1)

# "bo" is for "blue dot"

plt.plot(epochs, loss, 'bo', label='Training loss')

# b is for "solid blue line"

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.xlabel('Epochs')

plt.ylabel('Loss')

plt.legend()

plt.show()

plt.clf() # clear figure

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.xlabel('Epochs')

plt.ylabel('Accuracy')

plt.legend()

plt.show()

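In these plots, the dots represent the training loss and accuracy, and the solid lines are the validation loss and accuracy.

Notice the training loss *decreases* with each epoch and the training accuracy *increases* with each epoch. This is expected when using gradient descent optimization: it should minimize the desired quantity on every iteration.

This isn't the case for the validation loss and accuracy. In the training log above, validation loss reaches its minimum around epoch 7 and validation accuracy peaks around epoch 8, after which both get worse. This is an example of overfitting: the model performs better on the training data than it does on data it has never seen before. After this point, the model over-optimizes and learns representations *specific* to the training data that do not *generalize* to test data.

For this particular case, we could prevent overfitting by simply stopping the training after about eight epochs. Later, you'll see how to do this automatically with a callback.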
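Keras provides `tf.keras.callbacks.EarlyStopping` (with `monitor` and `patience` arguments) for stopping automatically. The plain-Python sketch below only illustrates the underlying rule, stopping once validation loss has not improved for `patience` consecutive epochs; it is not the library's implementation. The function name `early_stopping_epoch` is hypothetical, and the loss values are the first 12 `val_loss` entries from the training log.

```python
def early_stopping_epoch(val_losses, patience):
    """Return (stop_epoch, best_epoch), both 1-based.

    Training stops once the validation loss has failed to improve
    for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = 0
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    # Never ran out of patience: training used every epoch.
    return len(val_losses), best_epoch


# val_loss for the first 12 epochs, copied from the training log.
val_losses = [0.6155, 0.5170, 0.4133, 0.3504, 0.3194, 0.3065,
              0.3014, 0.3034, 0.3098, 0.3199, 0.3313, 0.3430]

stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)  # 10 7
```

With a patience of 3, this rule would have ended the run at epoch 10 instead of 40, with the lowest validation loss at epoch 7.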

# MIT License

#

# Copyright (c) 2017 François Chollet # IGNORE_COPYRIGHT: cleared by OSS licensing

#

# Permission is hereby granted, free of charge, to any person obtaining a

# copy of this software and associated documentation files (the "Software"),

# to deal in the Software without restriction, including without limitation

# the rights to use, copy, modify, merge, publish, distribute, sublicense,

# and/or sell copies of the Software, and to permit persons to whom the

# Software is furnished to do so, subject to the following conditions:

#

# The above copyright notice and this permission notice shall be included in

# all copies or substantial portions of the Software.

#

# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL

# THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING

# FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER

# DEALINGS IN THE SOFTWARE.